AI Automation for Healthcare: Compliance-Driven Solutions in 2023

Discover how compliance-first design in AI healthcare automation enhances safety and efficiency.


AI automation for healthcare only creates durable value when compliance is built into the system from day one. A strong healthcare AI automation agency does not start with models -- it starts with risk boundaries, secure infrastructure, human review, and auditable workflows.

I see one mistake often in AI automation for healthcare. Teams evaluate demos before they define HIPAA, HITECH, and FDA exposure, access controls, and operational accountability. That is backwards. Compliance in healthcare AI automation is not a legal checkbox added after launch; it is the architecture. At Imversion Technologies Pvt Ltd, I approach healthcare automation as a system design problem first, because strong architecture defines product success and weak foundations create downstream risk quickly. The real win is not flashy automation. It is safe, measurable throughput improvement across sensitive workflows.

AI automation for healthcare covers a broad spectrum. Some workflows are simple orchestration with rules. Others use AI to extract, summarize, classify, or generate drafts. A smaller set crosses into higher-risk clinical territory, where scrutiny rises sharply. I push teams to separate these categories early, because technology should solve real problems -- not blur risk until nobody owns it.

Operational healthcare workflow automation handles repetitive, high-volume work without making independent clinical decisions. That includes intake processing, eligibility checks, scheduling coordination, referral routing, claims follow-up, document classification, and prior authorization packet assembly.

This is where medical process automation usually starts. The workflow is document-heavy, delay-prone, and expensive to run manually. AI can read forms, extract fields, match records, identify missing information, and draft next actions. But the output should stay constrained. Structured drafts beat unconstrained generation.

Here, the system supports care operations without becoming the clinician. Think note drafting from ambient or structured inputs, coding assistance, lab order routing, discharge summary formatting, and inbox triage. A common pattern I encounter is that teams overestimate the model and underestimate workflow design. The real value comes from reducing navigation, data entry, and handoff friction.

At Imversion Technologies Pvt Ltd, I treat these systems as assistive layers. They recommend, summarize, and prepare. Humans approve.

If AI influences diagnosis, treatment selection, patient-specific medical advice, or functions that resemble Software as a Medical Device, risk changes materially. That does not mean innovation stops. It means controls tighten -- validation, traceability, intended-use definition, and possible FDA considerations all move to the center.

That distinction matters.

Because not every healthcare automation project deserves the same level of autonomy, and pretending otherwise is how organizations create compliance and patient-safety problems.

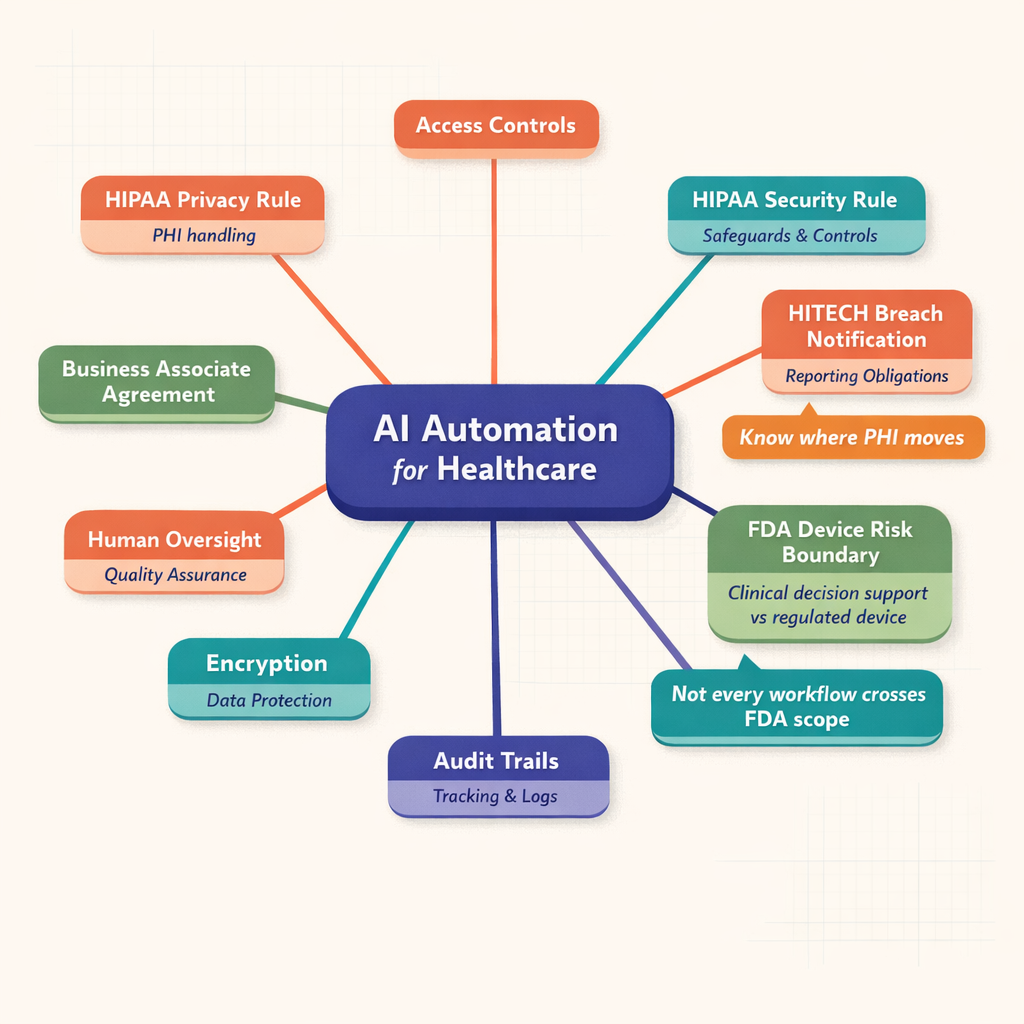

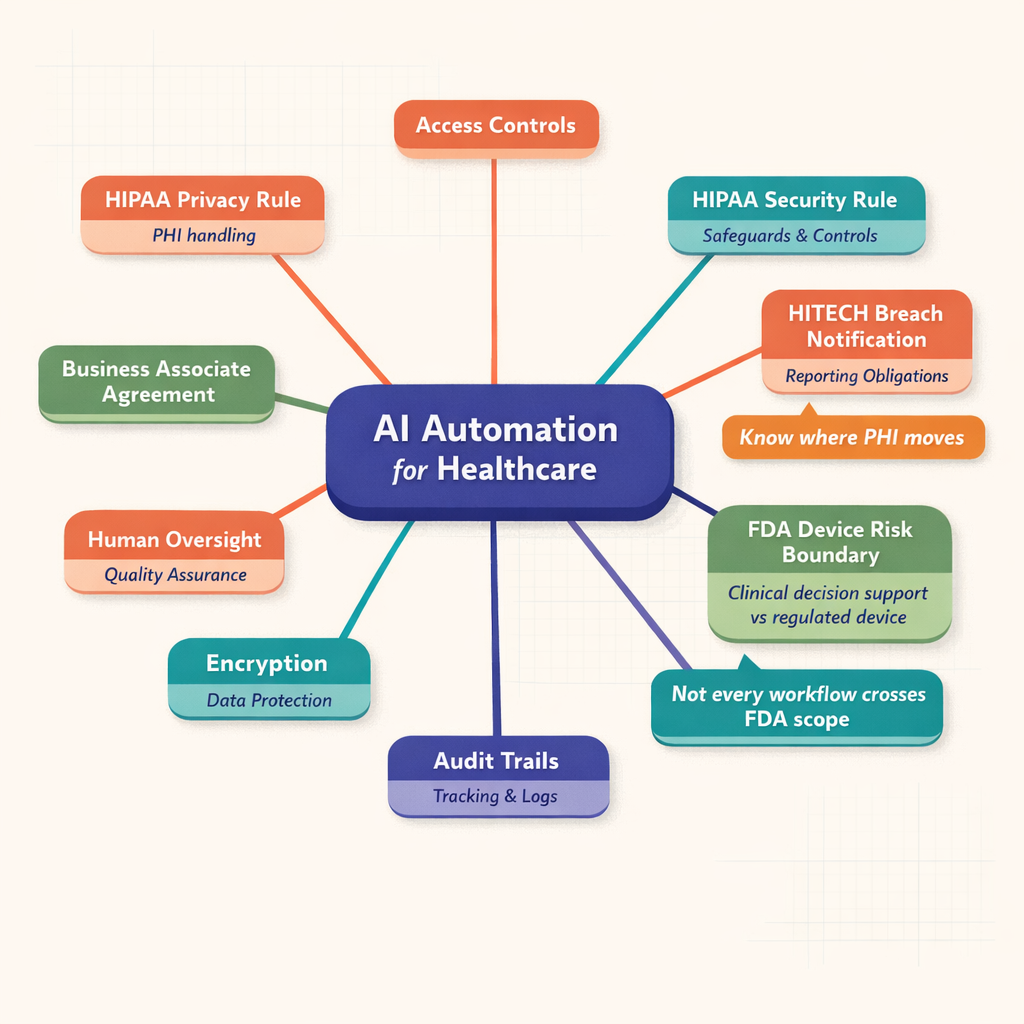

Healthcare buyers do not need to become lawyers. But they do need an actionable understanding of the compliance stack. I frame it this way: HIPAA defines core privacy and security obligations for protected health information, HITECH raises expectations around electronic health information and enforcement, and FDA oversight may apply if the system influences clinical decisions in a regulated way.

Diagram showing a central AI automation for healthcare hub connected to HIPAA Privacy Rule, HIPAA Security Rule, HITECH breach notification, FDA risk boundary, BAA, encryption, access controls, audit trails, and human review controls


HIPAA AI automation has to align with the Privacy Rule, Security Rule, and Breach Notification Rule. The Privacy Rule governs permitted uses and disclosures of PHI. The Security Rule requires administrative, physical, and technical safeguards for electronic PHI. The Breach Notification Rule defines what must happen if protected data is compromised.

For AI automation for healthcare, this means I need to design for minimum necessary access, role-based permissions, auditability, secure transmission, encryption, and operational accountability. According to HHS guidance and industry practice, business associates handling PHI must also meet contractual and security expectations. If a model vendor, orchestration platform, logging system, or storage layer touches PHI, I treat that as a design decision with legal consequences.
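A minimal sketch of how minimum necessary access can be enforced in code, assuming a simple role-to-field allowlist. The role names, field names, and the `minimum_necessary` helper are hypothetical illustrations; a real mapping would come out of policy and compliance review, not from this example.

```python
# Hypothetical role-to-field allowlist; real mappings come from policy review.
ROLE_FIELDS = {
    "scheduler": {"patient_id", "name", "phone", "appointment_time"},
    "coder": {"patient_id", "encounter_id", "diagnosis_text"},
    "billing": {"patient_id", "insurance_id", "claim_status"},
}


def minimum_necessary(record: dict, role: str) -> dict:
    """Return only the fields the role may see; unknown roles get nothing."""
    allowed = ROLE_FIELDS.get(role, set())
    return {key: value for key, value in record.items() if key in allowed}


# Illustrative record with made-up values.
patient_record = {
    "patient_id": "p-001",
    "name": "Test Patient",
    "phone": "555-0100",
    "appointment_time": "2024-01-15T09:00",
    "diagnosis_text": "example diagnosis text",
    "insurance_id": "ins-42",
}

scheduler_view = minimum_necessary(patient_record, "scheduler")
unknown_view = minimum_necessary(patient_record, "contractor")
```

The default-deny behavior for unknown roles is the design point: a role that nobody has mapped sees no PHI at all, instead of falling back to full access.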

HIPAA has been in place since 1996. The Security Rule standards have shaped ePHI controls for years. Breach reporting obligations create real operational pressure. So I never treat HIPAA as abstract policy. It is deployment logic.

HITECH strengthened the practical reality of enforcement around electronic records and breach accountability. It pushed healthcare deeper into digital operations while increasing consequences for poor controls. According to industry reports, enforcement activity and settlement visibility have made covered entities much less tolerant of vague vendor postures.

From an implementation standpoint, HITECH reinforces what I already believe: scalability should be planned early. If a system will process electronic health information at production volume, governance cannot be improvised. At Imversion Technologies Pvt Ltd, I build with logging discipline, access segmentation, and documented workflows from the start because retrofitting governance later is slower and more expensive.

FDA considerations emerge when AI moves beyond administrative support and starts performing functions tied to diagnosis, treatment, or other intended medical use. If a product behaves like Software as a Medical Device, or materially influences a clinical decision, oversight may apply.

I advise teams to ask:

If the answer pattern points toward medical decision influence, I tighten validation, constrain output, and involve regulatory review early. In my experience at Imversion Technologies Pvt Ltd, organizations get into trouble when they describe a tool as “just assistive” while designing it to drive treatment behavior. Labels do not control risk. System behavior does.

A credible healthcare AI automation agency should arrive with safeguards already embedded in its operating model. Not promised later. Present now.

For HIPAA AI automation, I start with Business Associate Agreements wherever PHI is involved. If a vendor will process, store, transmit, or meaningfully access PHI, the contract structure matters. I also look for vendors with mature control environments -- SOC 2 is not the whole answer, but it is a useful signal of discipline.

My baseline checklist includes:

I prefer vendors that support regional control, private networking options, and logging configurations that do not leak sensitive content.

This is where compliance in healthcare AI automation becomes real. I expect encryption in transit and at rest, RBAC, audit trails, secure key management, environment isolation, PHI-safe observability, and controlled data retention. If production and non-production environments are loosely separated, I stop the conversation.
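To make PHI-safe observability concrete, here is a small sketch of a log filter built on Python's standard `logging` module. The regular expressions and the `PhiRedactionFilter` name are assumptions for illustration; pattern matching of this kind catches only obvious identifiers and does not amount to de-identification.

```python
import logging
import re

# Illustrative patterns only; they catch obvious identifiers, not all PHI.
PATTERNS = [
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[REDACTED-SSN]"),
    (re.compile(r"\bMRN[:\s]*\d+\b", re.IGNORECASE), "[REDACTED-MRN]"),
    (re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"), "[REDACTED-EMAIL]"),
]


def redact(text: str) -> str:
    """Replace each matched identifier with a fixed placeholder."""
    for pattern, replacement in PATTERNS:
        text = pattern.sub(replacement, text)
    return text


class PhiRedactionFilter(logging.Filter):
    """Redact the fully formatted message before any handler emits it."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.msg = redact(record.getMessage())
        record.args = ()  # arguments are already merged into msg
        return True


logger = logging.getLogger("intake")
logger.addFilter(PhiRedactionFilter())
```

Attaching the filter to the logger means redaction happens before a record reaches any handler attached to that logger, so the same rule applies to console output, files, and log shippers.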

At Imversion Technologies Pvt Ltd, I usually recommend:

Because systems fail at boundaries, not in diagrams.

So I also insist on operational controls: access reviews, incident drills, change approval, and rollback capability. A pattern I see often is teams investing heavily in model prompts while ignoring key rotation, audit log design, and exception handling. That is the wrong priority stack.

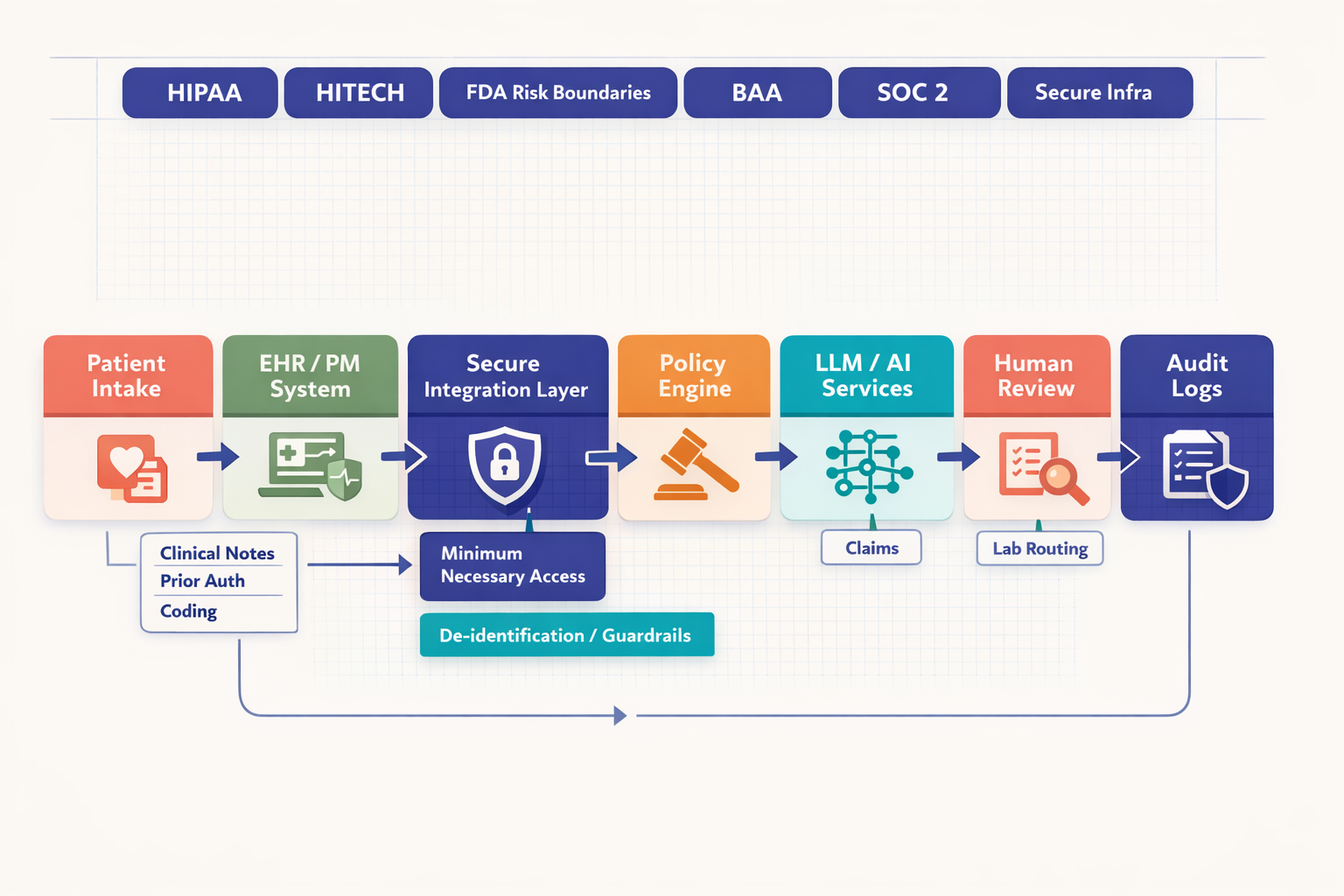

System architecture diagram showing patient intake, EHR, secure integration layer, policy engine, AI services, human review, payer systems, lab systems, and audit logging with HIPAA, HITECH, BAA, and SOC 2 controls called out around the workflow


The best medical process automation targets repetitive workflows with clear inputs, clear outputs, and costly delays. I focus on places where staff spend time gathering data, re-entering data, chasing documents, or reconciling fragmented systems.

Clinical notes are a strong example. AI can convert ambient or structured inputs into draft notes, summarize encounters, and format documentation for clinician review. The outcome is lower note completion burden and faster chart closure. But human sign-off should remain mandatory, because the note becomes part of the legal and clinical record.

Prior authorization is another high-value target. AI can collect chart elements, identify payer requirements, assemble a submission draft, flag missing evidence, and route exceptions. That improves turnaround and reduces staff swivel-chair work. Compliance concerns center on PHI handling, payer-specific rule changes, and maintaining staff review before submission.

Coding support fits well too. AI can propose codes from documentation, identify gaps, and surface justification snippets. The business benefit is faster throughput and fewer omissions. Still, coding teams should approve final output, especially where reimbursement risk or clinical specificity is high.

Intake automation is often underestimated. It can extract demographics, insurance details, consent data, and referral information from forms or uploaded documents, validate fields, and send exceptions to staff. That improves front-desk efficiency and reduces registration errors. Because intake touches sensitive identity and insurance data, access control and retention policy matter immediately.

Lab routing, scheduling, and claims follow the same pattern. AI can classify orders, match routing logic, suggest scheduling slots, predict no-show risk, prioritize reminders, organize claim status follow-up, and draft appeal support. But approval remains important where errors could delay care, disrupt patient communication, or create billing disputes.

Comparison table showing healthcare automation use cases including clinical notes, prior authorization, medical coding, patient intake, lab routing, scheduling, and claims processing with columns for primary benefit, compliance considerations, human review needs, and implementation complexity


| Use Case | Value | PHI Sensitivity | Human-in-the-Loop Need | Implementation Complexity |
|---|---|---|---|---|
| Clinical note drafting | Faster documentation, reduced clinician admin time | High | High | Medium |
| Prior authorization assembly | Faster submission prep, lower admin burden | High | High | High |
| Coding assistance | Better throughput, fewer missed codes | High | High | Medium |
| Digital intake | Fewer registration errors, faster onboarding | High | Medium | Medium |
| Lab routing | Faster order handling, fewer manual handoffs | High | Medium | Medium |
| Scheduling automation | Better utilization, lower no-show rates | Medium | Medium | Low-Medium |
| Claims follow-up | Faster status handling, fewer delays | High | Medium-High | Medium |

The pattern is simple. Use AI to draft, classify, summarize, route, and prioritize. Keep humans in approval roles where financial, clinical, or legal consequences are significant. That is how I build healthcare workflow automation that scales without losing control.
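The draft-then-approve pattern can be sketched as a small state gate. The `Draft`, `review`, and `release` names are hypothetical; the point is only that AI output cannot leave the system until a named human has approved it.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Draft:
    draft_id: str
    kind: str  # e.g. "clinical_note" or "prior_auth" (illustrative labels)
    content: str
    status: Status = Status.DRAFT
    reviewer: Optional[str] = None


def review(draft: Draft, reviewer: str, approve: bool) -> None:
    """Record the human decision and who made it."""
    draft.reviewer = reviewer
    draft.status = Status.APPROVED if approve else Status.REJECTED


def release(draft: Draft) -> str:
    """Return content for downstream use only after human approval."""
    if draft.status is not Status.APPROVED or draft.reviewer is None:
        raise PermissionError(f"draft {draft.draft_id} has not been approved")
    return draft.content
```

Calling `release` on an unreviewed or rejected draft raises `PermissionError`, so a missing review becomes a visible failure rather than a silent submission.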

Architecture is where good intentions meet production. At Imversion Technologies Pvt Ltd, I design AI automation for healthcare as a layered system -- data flow, model layer, control layer. That separation keeps risk manageable and operations clear.

I prefer EHR-connected workflows through secure APIs, not brittle exports. Queue-based processing helps isolate failures and control throughput. Document ingestion pipelines should classify files, extract metadata, apply redaction where needed, and route tasks to the right services.
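As a rough illustration of queue-based ingestion with failure isolation, the sketch below uses the standard-library `queue` module. The filename-based `classify` function is a toy stand-in for a real document classifier, and the in-memory dead-letter list is an assumption; a production system would typically use a durable message broker.

```python
import queue


def classify(doc: dict) -> str:
    """Toy classifier keyed on filename; stands in for a real model or rule set."""
    name = doc["filename"].lower()
    if "referral" in name:
        return "referral"
    if "insurance" in name:
        return "insurance_card"
    raise ValueError(f"unrecognized document: {doc['filename']}")


def drain(inbox: queue.Queue, routed: dict, dead_letter: list) -> None:
    """Process every queued document; one bad file never blocks the rest."""
    while True:
        try:
            doc = inbox.get_nowait()
        except queue.Empty:
            return
        try:
            routed.setdefault(classify(doc), []).append(doc["filename"])
        except ValueError as exc:
            # Park the failure for staff review instead of crashing the worker.
            dead_letter.append((doc["filename"], str(exc)))
        finally:
            inbox.task_done()


inbox = queue.Queue()
for filename in ["referral_0412.pdf", "scan_unknown.pdf", "insurance_front.png"]:
    inbox.put({"filename": filename})

routed, dead_letter = {}, []
drain(inbox, routed, dead_letter)
```

The unrecognized scan ends up in `dead_letter` with its error message, while the other two documents are still routed.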

For healthcare workflow automation, retrieval-augmented generation works best when the retrieval corpus is approved, versioned, and context-bounded. I do not want a model guessing from memory when it can ground outputs in policy documents, payer rules, or internal templates.
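One way to keep a retrieval corpus approved and versioned is to filter it before any search runs. The sketch below assumes a hypothetical `PolicyDoc` record with an `approved` flag and an integer `version`, and uses naive keyword overlap in place of a real retriever.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PolicyDoc:
    doc_id: str
    version: int
    approved: bool
    text: str


def retrievable(corpus: list) -> list:
    """Keep only the highest approved version of each document."""
    latest = {}
    for doc in corpus:
        if not doc.approved:
            continue
        current = latest.get(doc.doc_id)
        if current is None or doc.version > current.version:
            latest[doc.doc_id] = doc
    return list(latest.values())


def retrieve(query: str, corpus: list, limit: int = 3) -> list:
    """Rank eligible documents by shared words with the query (toy scoring)."""
    terms = set(query.lower().split())
    scored = []
    for doc in retrievable(corpus):
        overlap = len(terms & set(doc.text.lower().split()))
        if overlap:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:limit]]
```

An unapproved draft version never reaches the model, even if it is newer than the approved one, because eligibility is decided before scoring.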

The safest model layer is the one that matches the task and exposure level. For deterministic routing, validation, and eligibility checks, rules and traditional automation often beat generative AI. For summarization, extraction, and draft generation, I use models behind compliant vendor endpoints or private hosting where the risk profile demands tighter control.
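A minimal sketch of matching the engine to the task and exposure level. The task labels and engine names (`rules`, `vendor_model`, `private_model`) are hypothetical placeholders, not a product list.

```python
# Hypothetical task labels grouped by the kind of engine that suits them.
DETERMINISTIC_TASKS = {"routing", "validation", "eligibility_check"}
GENERATIVE_TASKS = {"summarization", "extraction", "draft_generation"}


def choose_engine(task: str, high_exposure: bool) -> str:
    """Pick the least powerful engine that fits the task and risk profile."""
    if task in DETERMINISTIC_TASKS:
        return "rules"
    if task in GENERATIVE_TASKS:
        # Tighter risk profiles go to private hosting in this sketch.
        return "private_model" if high_exposure else "vendor_model"
    # Unknown tasks are rejected rather than sent to a general-purpose model.
    raise ValueError(f"no engine registered for task: {task}")
```

Refusing unknown tasks keeps new workflows from quietly defaulting to a generative model before anyone has assessed them.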

That answer is not glamorous. It is correct.

HIPAA AI automation should not default to a general-purpose model for every job. I use the smallest reliable capability that solves the problem.

The control layer carries the real governance load: approval workflows, audit logging, observability, version control, fallback logic, and exception management. I want every sensitive action traceable -- who triggered it, what data was used, what model version responded, what rule fired, and who approved the output.
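The traceability fields listed above map naturally onto an immutable record. The sketch below is one possible shape, with hypothetical field and function names; it stores references to data rather than the data itself, which is an assumption made to keep PHI out of the audit log.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditRecord:
    triggered_by: str           # who triggered the action
    data_refs: tuple            # identifiers of the data used, not its content
    model_version: str          # which model version responded
    rule_fired: Optional[str]   # which rule fired, if any
    approved_by: Optional[str]  # who approved the output, if anyone yet
    recorded_at: str            # UTC timestamp in ISO 8601 format


def new_record(triggered_by, data_refs, model_version,
               rule_fired=None, approved_by=None) -> AuditRecord:
    """Build a record stamped with the current UTC time."""
    return AuditRecord(
        triggered_by=triggered_by,
        data_refs=tuple(data_refs),
        model_version=model_version,
        rule_fired=rule_fired,
        approved_by=approved_by,
        recorded_at=datetime.now(timezone.utc).isoformat(),
    )


def to_json_line(record: AuditRecord) -> str:
    """Serialize with sorted keys so log lines are stable and easy to diff."""
    return json.dumps(asdict(record), sort_keys=True)
```

Because the dataclass is frozen, any attempt to edit a record after creation raises an error; corrections are written as new records instead.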

A strong pattern includes:

Because execution matters more than ideas, I build for recoverability. Systems will hit bad documents, malformed payloads, stale payer logic, and edge-case language. Observability is not optional. Neither is a safe failure path.

Compliance does not end at go-live. It starts there. Payer rules shift, access patterns drift, vendors update terms, and internal policies change. So I treat healthcare AI compliance as an ongoing operating function: prompt and model version logs, drift checks, periodic access reviews, vendor reassessment, workflow audits, breach response readiness, and controlled change approval.

A healthcare AI automation agency should be able to work with compliance, IT, and operations in the same room -- and speak clearly to all three. I would evaluate an agency on a few non-negotiables:

Numbered flowchart showing regulatory update tracking, policy review, model testing, security validation, output monitoring, retraining, audit reporting, and vendor review, alongside a checklist for evaluating a healthcare AI automation agency


I am Sagar Hebbale, and this is exactly how I think about automation at Imversion Technologies Pvt Ltd. In my experience, the right partner does not sell autonomy first. It sells controlled throughput, measurable operational improvement, and secure implementation. That is the difference.

AI automation for healthcare works when compliance is the product, not a patch. If I am choosing a partner, I want one that can prove it in architecture, governance, and execution -- every single time.

The safest starting point is a bounded administrative workflow with clear inputs, clear outputs, and mandatory human review. Good first projects include intake classification, document routing, eligibility support, and prior authorization packet preparation because they can deliver measurable efficiency gains without giving the system independent clinical authority.

AI automation for healthcare usually expands the scope of vendor due diligence because insurers and security teams want proof of data handling boundaries, logging, access controls, and incident response readiness. An organization should expect underwriters and procurement teams to ask whether PHI touches model providers, subprocessors, analytics tools, or support workflows.

A healthcare AI automation agency should define metrics early so the organization can judge whether automation improves throughput without increasing denials, rework, privacy risk, or staff burden. Useful measures include turnaround time, exception rate, manual touch count, approval latency, audit completeness, and downstream error impact.
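Three of these measures can be computed from basic per-item workflow data. The sketch below assumes each work item is a dictionary with hypothetical keys `turnaround_minutes`, `exception`, and `manual_touches`; the sample values are invented for illustration.

```python
from statistics import median


def summarize(items: list) -> dict:
    """Compute turnaround, exception rate, and manual touches for a batch."""
    total = len(items)
    if total == 0:
        raise ValueError("no work items to summarize")
    return {
        "median_turnaround_minutes": median(i["turnaround_minutes"] for i in items),
        "exception_rate": sum(1 for i in items if i["exception"]) / total,
        "avg_manual_touches": sum(i["manual_touches"] for i in items) / total,
    }


# Invented sample batch, not real operational data.
batch = [
    {"turnaround_minutes": 10, "exception": True, "manual_touches": 1},
    {"turnaround_minutes": 20, "exception": False, "manual_touches": 2},
    {"turnaround_minutes": 30, "exception": False, "manual_touches": 3},
    {"turnaround_minutes": 40, "exception": False, "manual_touches": 2},
]
summary = summarize(batch)
```

Running the same summary on a pre-automation baseline and on post-launch batches gives a like-for-like comparison.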

HIPAA AI automation does not require storing every output indefinitely; it requires a defensible retention policy tied to operational, legal, and audit needs. Organizations should keep only the records necessary for traceability, compliance, and dispute resolution, while deleting transient data on a controlled schedule to reduce unnecessary exposure.
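A controlled deletion schedule can be expressed as a per-kind retention table. The record kinds and durations below are placeholders, not legal guidance; actual retention periods need to come from counsel and the organization's own policy.

```python
from datetime import datetime, timedelta, timezone

# Placeholder durations for illustration only; real values come from policy.
RETENTION = {
    "transient_prompt": timedelta(days=7),
    "model_output_draft": timedelta(days=90),
}


def split_expired(records: list, now: datetime) -> tuple:
    """Separate records into (keep, purge) based on the retention table."""
    keep, purge = [], []
    for rec in records:
        limit = RETENTION.get(rec["kind"])
        if limit is not None and now - rec["created_at"] > limit:
            purge.append(rec)
        else:
            # Kinds without a configured limit are kept for manual review.
            keep.append(rec)
    return keep, purge


now = datetime(2024, 1, 31, tzinfo=timezone.utc)
records = [
    {"id": "r1", "kind": "transient_prompt", "created_at": datetime(2024, 1, 1, tzinfo=timezone.utc)},
    {"id": "r2", "kind": "model_output_draft", "created_at": datetime(2024, 1, 1, tzinfo=timezone.utc)},
    {"id": "r3", "kind": "audit_record", "created_at": datetime(2020, 1, 1, tzinfo=timezone.utc)},
]
keep, purge = split_expired(records, now)
```

Keeping unmapped kinds rather than deleting them is a deliberate choice in this sketch: nothing is purged unless a policy explicitly covers it.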

Teams should treat model drift as an operational risk that needs routine monitoring, threshold alerts, periodic revalidation, and rollback options. The practical approach is to compare current outputs against approved benchmarks, review exception trends, and pause or constrain automation when accuracy, formatting, or policy alignment starts to decline.
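The benchmark comparison can be sketched as an agreement rate with two thresholds. The threshold values and the `ok` / `alert` / `pause` actions are assumptions chosen for illustration; exact-match comparison suits classification or coding outputs better than free-text drafts.

```python
def agreement_rate(outputs: dict, benchmark: dict) -> float:
    """Share of benchmark cases where the current output matches the approved one."""
    if not benchmark:
        raise ValueError("benchmark is empty")
    matches = sum(
        1 for case_id, expected in benchmark.items()
        if outputs.get(case_id) == expected
    )
    return matches / len(benchmark)


def drift_action(rate: float, warn_at: float = 0.95, pause_at: float = 0.90) -> str:
    """Map an agreement rate to an operational response (illustrative thresholds)."""
    if rate < pause_at:
        return "pause"
    if rate < warn_at:
        return "alert"
    return "ok"


# Invented benchmark of approved labels and a current run with one mismatch.
benchmark = {"c1": "referral", "c2": "insurance_card", "c3": "consent", "c4": "referral"}
current = {"c1": "referral", "c2": "insurance_card", "c3": "referral", "c4": "referral"}
rate = agreement_rate(current, benchmark)
action = drift_action(rate)
```

With three of four cases matching, the rate falls below the pause threshold, so the sketch returns `pause` and the workflow would route back to manual handling until revalidation.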