AI Automation Agency Checklist: 15 Essential Questions for 2024

Evaluate potential partners to secure effective AI automation solutions.

A good AI automation agency checklist helps me cut through sales polish and see whether an agency can actually deliver secure, scalable automation. If I plan to hire an AI automation agency, I need proof across three areas: technical fit, execution discipline, and commercial clarity.

I have seen buyers focus too much on demos and too little on architecture, integrations, support, and ownership terms. That is where expensive mistakes begin. In this guide, I break down the most practical questions to ask AI agency teams before signing, group them into three evaluation areas, and show how I would score the answers. I also cover the red flags that usually signal a weak partner long before implementation starts.

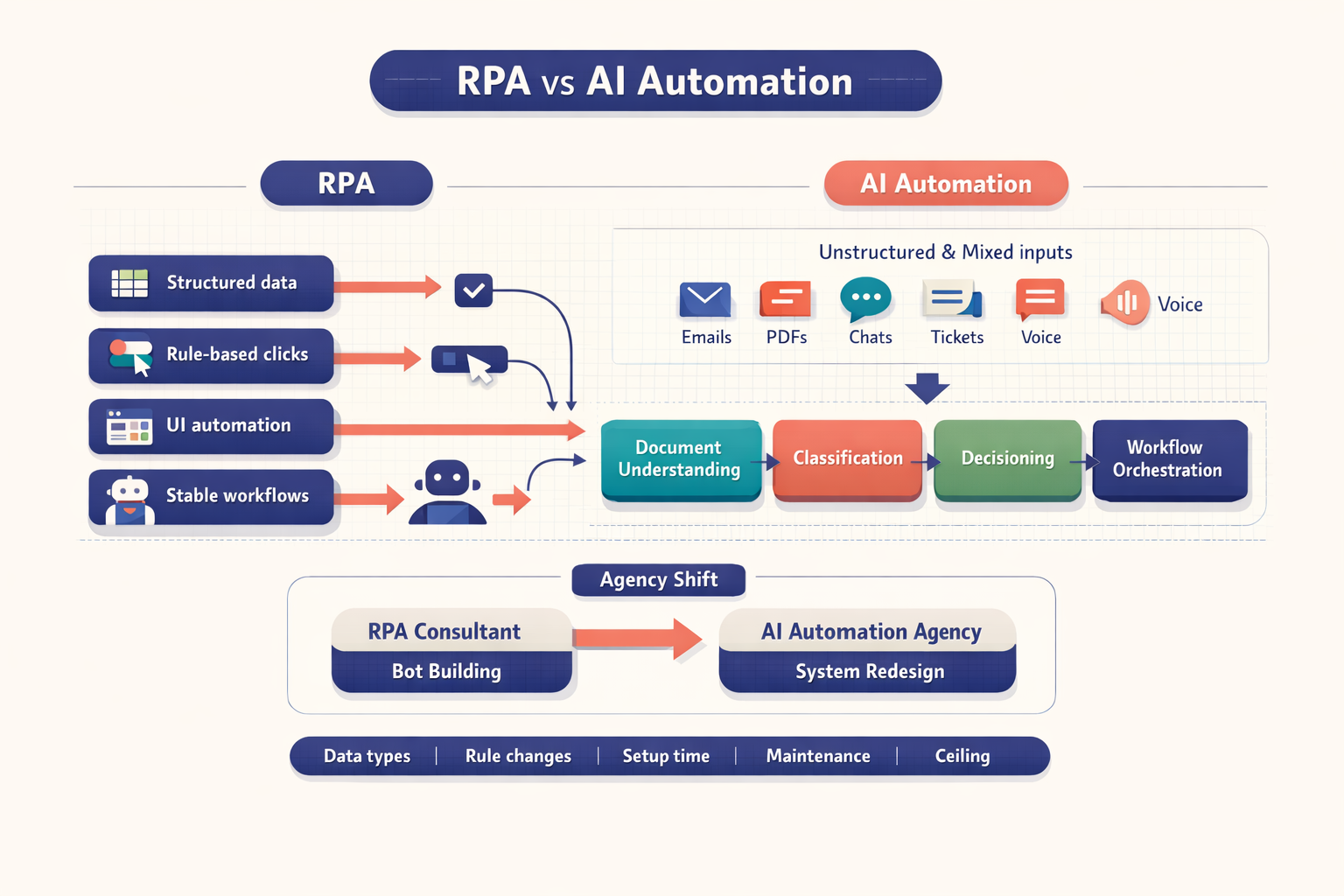

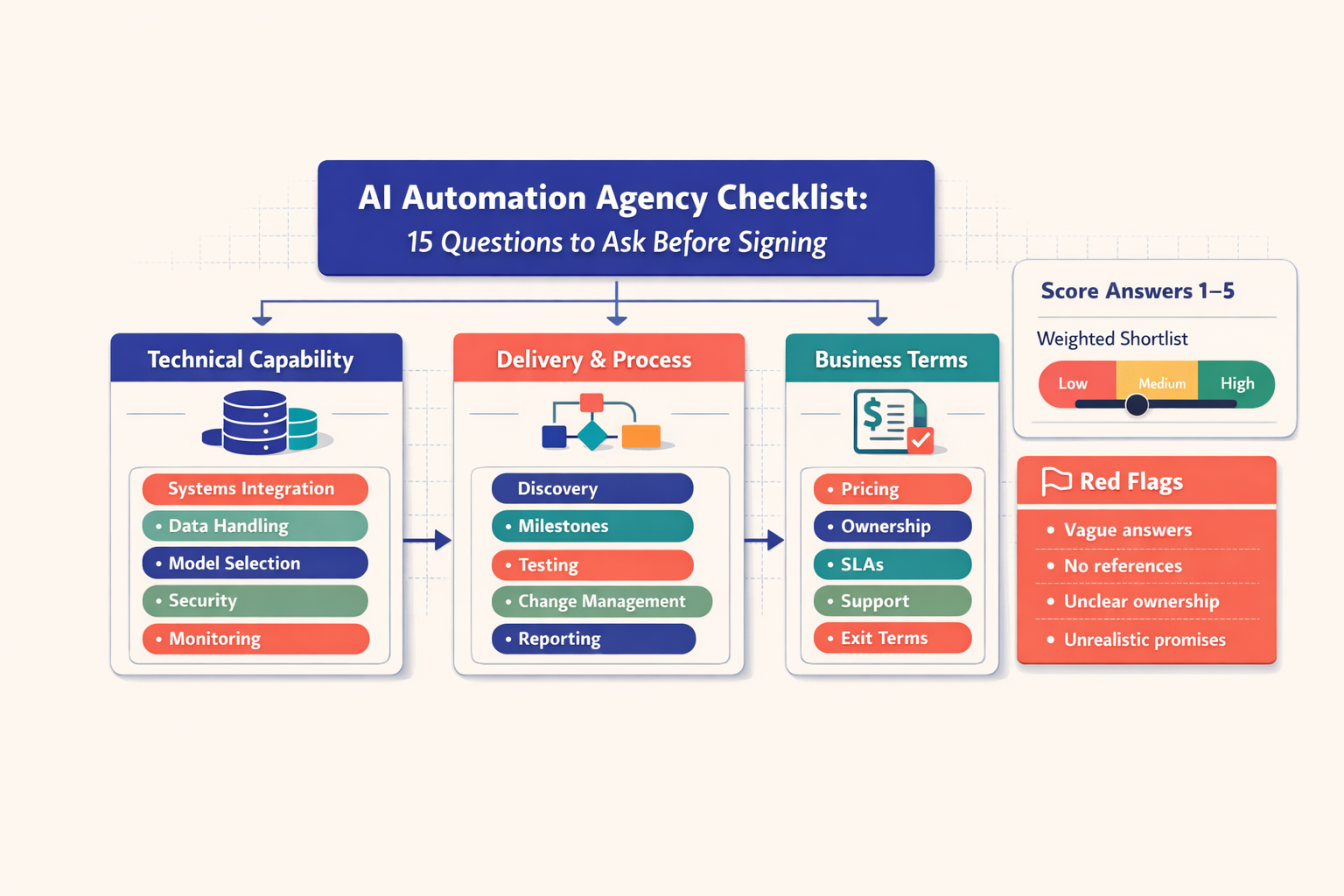

Wide checklist diagram showing three evaluation columns for technical capability, delivery process, and business terms, alongside a 1-to-5 scoring panel and a boxed list of agency red flags

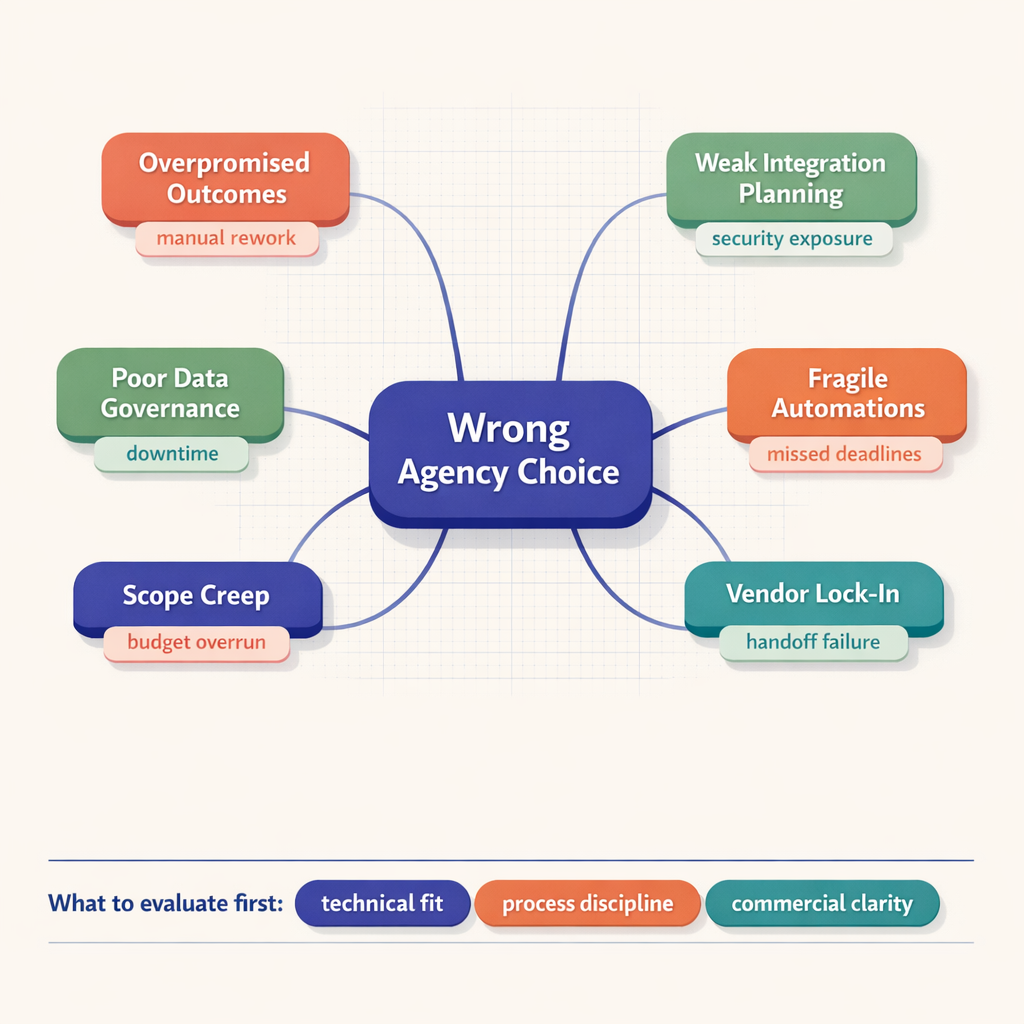

Choosing the wrong partner does not just create a bad software project. It creates an operations problem.

When I talk to teams preparing to hire an AI automation agency, I usually see the same trap: they buy the promise of transformation before they inspect the mechanics of delivery. That is backwards. AI automation touches workflows, data access, approval chains, customer interactions, and internal accountability. If the agency gets those wrong, the business pays for it.

A weak partner tends to overpromise early. They say yes to every use case. They pitch full automation where assisted automation is the better design. They ignore brittle edge cases. Then production starts, integrations fail, the workflow needs human review, and nobody planned for that reality. The automation ends up half-used, or worse, creates new manual work.

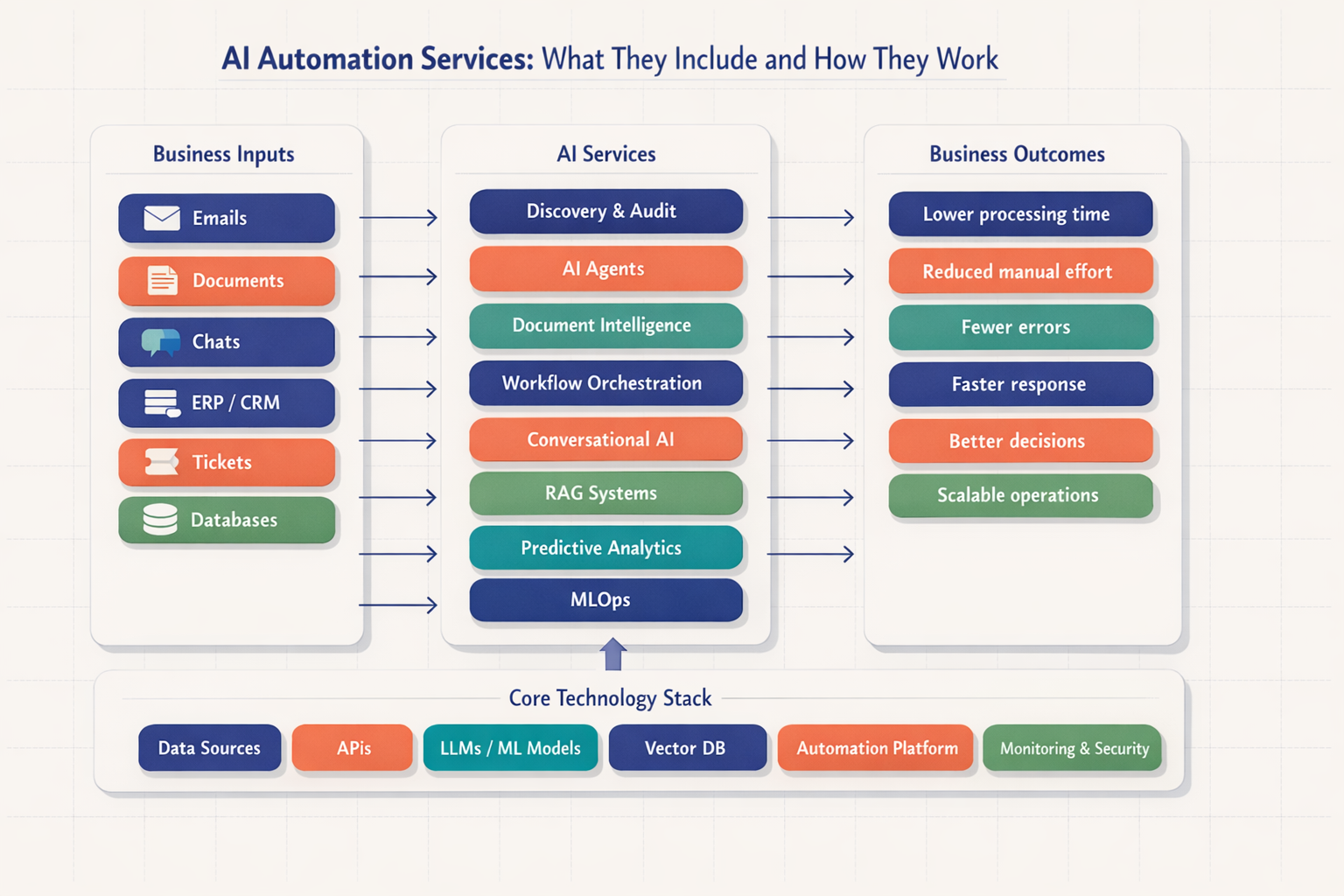

Diagram with a central 'wrong agency choice' node connected to six operational risks such as scope creep, vendor lock-in, fragile automations, and weak integration planning, each labeled with business impact

According to industry reports, a large share of AI initiatives stall before broad adoption, and software transformation programs frequently overrun on time and cost because process change was underestimated. I do not need a precise percentage to know the pattern is real -- I see it reflected in how often buyers inherit fragmented tools, undocumented logic, and no post-launch accountability.

At Imversion Technologies Pvt Ltd, a common pattern I encounter is that the real risk is not model quality alone. It is poor system design around the model. Weak security practices, loose prompt handling, no role-based access, and vague compliance language become serious issues fast if sensitive workflows touch GDPR, HIPAA, or SOC 2 environments.

And then come the hidden costs.

Unused licenses. Rebuilds. Vendor lock-in. Retraining staff. Reworking prompts and integration logic. Paying another team to stabilize what should have been designed properly from day one. That is why I tell buyers to evaluate AI automation partner options as if they were selecting an operations layer, not buying a demo.

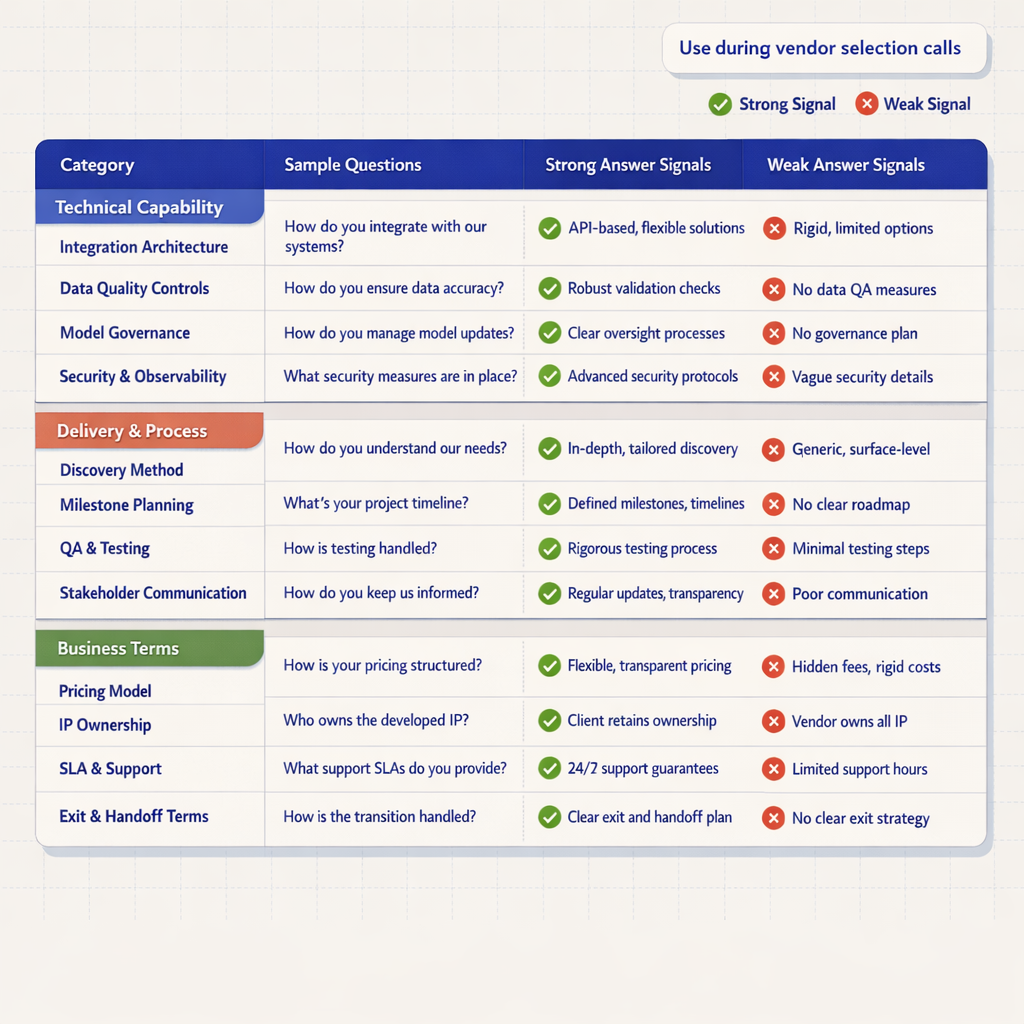

I want industry relevance, not broad AI theater. A capable agency should explain which workflows they have automated in similar environments and where the constraints usually appear.

I look for specifics about process types -- support triage, sales qualification, invoice extraction, knowledge assistants, internal copilots, compliance review, or workflow orchestration. Good agencies explain why domain context matters and where human review still needs to remain in place.

“AI works in every industry” is not a real answer. Neither is a list of unrelated use cases with no mention of data structure, exception handling, or operational constraints.

This question reveals whether the agency understands architecture or just resells one stack. I expect a clear explanation of how they choose among OpenAI, Anthropic, Azure OpenAI, Amazon Bedrock, open-source models, vector databases like Pinecone, and orchestration layers for a given use case.

A strong partner can compare tradeoffs -- cost, latency, governance, hosting requirements, context length, and retrieval patterns. They should also explain where they use Zapier, Make, n8n, custom APIs, or traditional backend services instead of forcing everything through a no-code layer.

If the answer is basically “we use one platform for everything,” I get cautious. Stack monoculture usually points to design shortcuts.

Integration depth decides whether automation becomes operational or ornamental. If an agency cannot speak confidently about Salesforce, HubSpot, Slack, Zendesk, SAP, NetSuite, SharePoint, custom APIs, webhooks, and authentication patterns, I assume the real implementation risk is still hidden.

I want to hear about API-first design, middleware choices, retry logic, rate limits, logging, fallback handling, and permission models. Good agencies discuss what they do when systems are poorly documented or when a client has legacy dependencies.

“We can connect anything” without mentioning authentication, data mapping, or failure handling is just sales language.
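To make the retry and fallback handling above concrete, here is a minimal sketch of what I would expect an agency to be able to describe. It is an illustration under stated assumptions, not any vendor's implementation: `TransientError`, `call_with_retry`, and the default attempt count and delay are all hypothetical names and values I made up for the example.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical error type for failures worth retrying (timeouts, rate limits)."""


def call_with_retry(operation, max_attempts=4, base_delay=0.5, fallback=None, sleep=time.sleep):
    """Run operation(); retry transient failures with exponential backoff and jitter.

    If every attempt fails, return fallback() when one is provided, otherwise re-raise.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except TransientError:
            if attempt == max_attempts:
                if fallback is not None:
                    # Assumed fallback: e.g. queue the item for manual handling.
                    return fallback()
                raise
            # Exponential backoff: base, 2x, 4x, ... plus up to 10% random jitter.
            delay = base_delay * (2 ** (attempt - 1))
            sleep(delay + random.uniform(0, delay * 0.1))
```

The point of the sketch is the shape of the answer: a bounded number of attempts, growing delays so a rate-limited system is not hammered, and an explicit decision about what happens when the integration stays down.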

This is one of the most important questions to ask AI agency teams. If they process sensitive data, I need clarity on storage, retention, encryption, access controls, auditability, and vendor-level compliance expectations.

I expect specifics: encryption in transit and at rest, role-based access control, environment separation, secrets management, logging policies, PII masking, and guidance for GDPR, HIPAA, or SOC 2-aligned implementations. If relevant, they should explain where data is processed and whether prompts or outputs are retained by upstream model providers.

If they say “our provider is secure” and stop there, that is a weak answer. Security is not outsourced by naming the platform.

At Imversion Technologies Pvt Ltd, I have learned that buyers often discover too late that security was treated as a checkbox instead of an architecture requirement. That mistake gets expensive fast.
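As one small example of what "PII masking" can mean in practice, the sketch below strips email addresses and phone-like numbers from text before it is sent to a model provider. The patterns and the `mask_pii` name are my own simplifications for illustration; a production system would need broader detection (names, account numbers, addresses) and should not rely on two regular expressions.

```python
import re

# Deliberately simple patterns for illustration; real PII detection needs broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def mask_pii(text):
    """Replace email addresses and phone-like numbers with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


print(mask_pii("Contact jane.doe@example.com or +1 415-555-0100 today"))
# prints: Contact [EMAIL] or [PHONE] today
```

When an agency says it masks PII, I ask where in the pipeline this step runs and what happens to the unmasked original.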

Any serious AI automation agency checklist must include this question. AI systems fail in subtle ways. I do not trust anyone who only talks about prompt quality and ignores system-level validation.

I want to hear about benchmark datasets, scenario testing, retrieval evaluation, confidence thresholds, human-in-the-loop review, prompt versioning, fallback logic, guardrails, and production monitoring. Good partners define acceptable error rates by use case. An internal drafting assistant can tolerate more ambiguity than an automated compliance workflow.

If the testing story is “we manually try some prompts before launch,” that tells me the agency is not building for reliability. Reliability is the product.
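A rough sketch of two of the ideas above, confidence thresholds with human-in-the-loop review and acceptable error rates defined per use case, might look like this. The function names, the use-case keys, and the 10% and 1% limits are hypothetical values chosen for illustration, not recommended targets.

```python
def route_output(confidence, threshold):
    """Send low-confidence results to a human reviewer instead of auto-applying them."""
    return "auto" if confidence >= threshold else "human_review"


def error_rate(predictions, expected):
    """Share of benchmark cases where the system output differs from the expected label."""
    if len(predictions) != len(expected):
        raise ValueError("predictions and expected must be the same length")
    if not expected:
        raise ValueError("benchmark set is empty")
    wrong = sum(1 for p, e in zip(predictions, expected) if p != e)
    return wrong / len(expected)


# Hypothetical per-use-case limits: stricter for compliance than for drafting.
MAX_ERROR_RATE = {"drafting_assistant": 0.10, "compliance_workflow": 0.01}


def passes_release_gate(use_case, predictions, expected):
    """True when the measured benchmark error rate is within the use case's limit."""
    return error_rate(predictions, expected) <= MAX_ERROR_RATE[use_case]
```

With one wrong answer in a 20-case benchmark, this gate would pass the drafting assistant and block the compliance workflow, which is the kind of use-case-specific judgment I want an agency to spell out.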

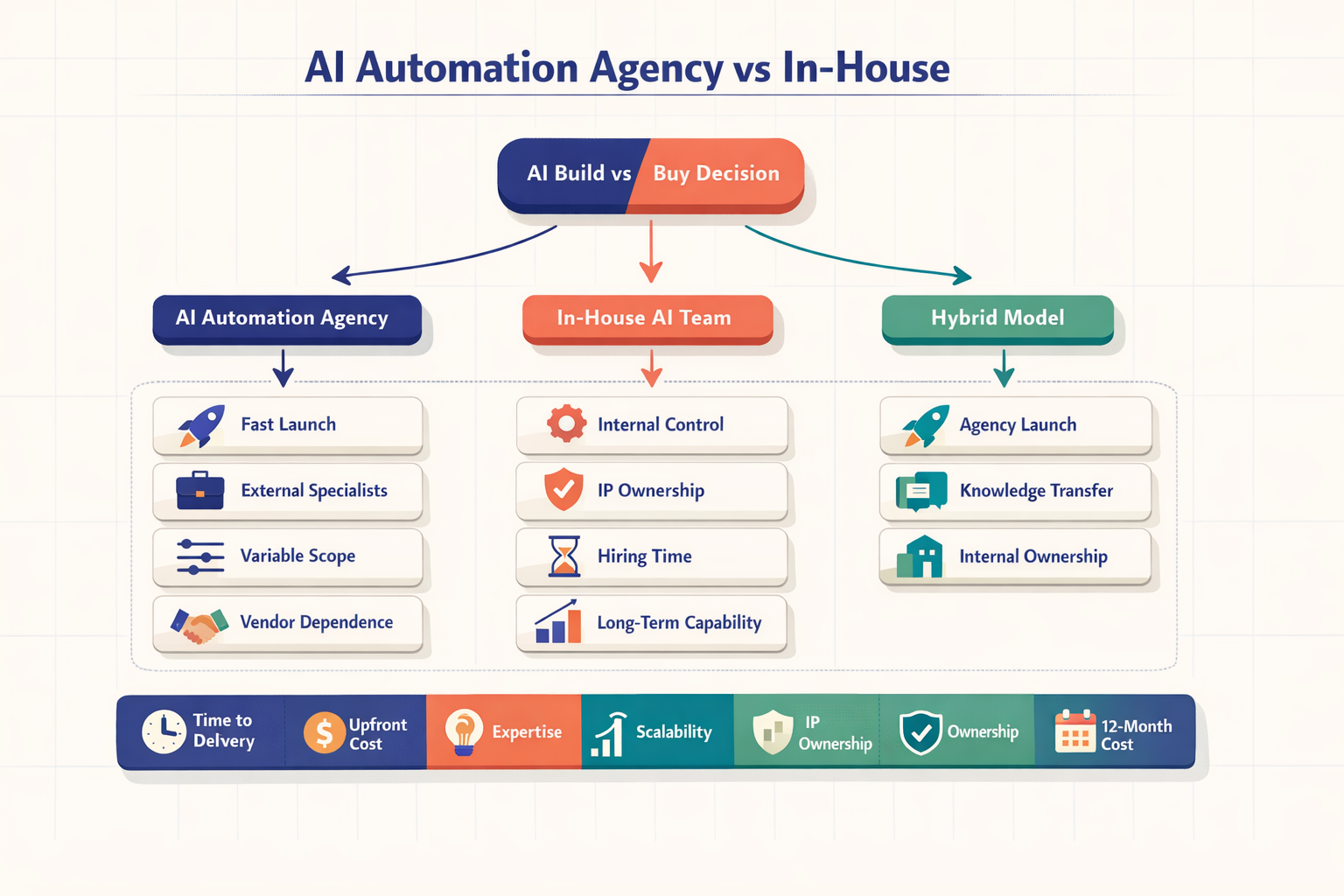

Three-part evaluation table showing technical, process, and business question categories with sample agency questions, strong answer signals, and weak answer signals in parallel columns

A disciplined agency scopes outcomes, dependencies, constraints, and measurable KPIs before build work starts. I want metrics tied to the business -- response time reduction, ticket deflection, labor hours saved, lead-routing speed, case resolution time, or document turnaround.

Discovery should uncover process owners, exceptions, approval paths, source systems, and failure points. If an agency skips this and jumps into tooling, they are guessing. At Imversion Technologies Pvt Ltd, I see stronger outcomes when workflow mapping happens before model selection, not after.

I prefer agencies that can explain when to run a 4- to 6-week pilot and when a broader rollout makes sense. Strong teams define pilot scope, success criteria, rollback plans, and the handoff path into production. Weak teams sell maximum scope on day one.

This matters more than buyers admit. I want to know whether senior architects stay involved, who owns project management, how often status reviews happen, and how risks are escalated. Weekly check-ins, written updates, decision logs, and documented blockers tell me the agency has a system. Random calls do not.

Post-launch support separates a real delivery partner from a build-and-disappear shop. I expect monitoring, issue triage, prompt and workflow tuning, documentation, user training, and defined ownership for optimization. A mature team talks about alerting, KPI review cadence, and support windows. An immature team says the system should just run.

Because execution matters more than ideas.

In my experience, agencies that deliver consistently do not sound dramatic. They sound operational. They explain phases clearly: discovery, architecture, implementation, testing, pilot, rollout, monitoring. They define who signs off at each stage. They document assumptions. They know where projects slip (usually unclear ownership, delayed access, or shifting scope) and they plan around that. That level of process discipline is what I look for before I hire an AI automation agency for anything business-critical.

For smart AI automation vendor selection, I need clarity on whether pricing is fixed fee, retainer, usage-based, or hybrid. I also ask what is not included: model usage, third-party licenses, vector database costs, cloud hosting, support, retraining, or future changes. Hidden exclusions distort total cost.
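To show how exclusions distort total cost, here is a small worked calculation. Every figure and category name in it is hypothetical and exists only to illustrate the arithmetic: quoted fee, plus twelve months of recurring exclusions, plus one-time exclusions.

```python
def first_year_cost(quoted_fee, monthly_extras, one_time_extras=None):
    """Quoted fee plus twelve months of recurring extras plus any one-time extras."""
    recurring = 12 * sum(monthly_extras.values())
    one_time = sum((one_time_extras or {}).values())
    return quoted_fee + recurring + one_time


# Hypothetical numbers for illustration only.
total = first_year_cost(
    quoted_fee=40_000,
    monthly_extras={"model_usage": 600, "vector_database": 150, "cloud_hosting": 250},
    one_time_extras={"user_training": 3_000},
)
print(total)  # prints: 55000
```

In this made-up case the first-year cost is well above the quoted fee, which is why I ask for exclusions in writing before comparing proposals.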

This question protects flexibility. I want explicit ownership language for deliverables, configuration assets, and operational documentation. If the agency keeps core assets proprietary without a good reason, I see lock-in risk.

Scope always changes. Good agencies define a change request process, pricing triggers, turnaround expectations, and an approval flow. Weak agencies stay vague, then turn every adjustment into a commercial surprise.

I look for practical commitments: response times, severity definitions, uptime targets where relevant, escalation paths, and support coverage by timezone. If the automation affects revenue or customer operations, support terms should reflect that reality.

This is one of the most overlooked ways to evaluate AI automation partner options. I ask what happens if I terminate, switch vendors, or move the system in-house. I want transition documentation, access handover, and a clear offboarding process. At Imversion Technologies Pvt Ltd, I believe strong architecture defines product success, and part of strong architecture is making sure the client is never trapped.

I use a simple 1-to-5 score for each question in this AI automation agency checklist.

1 means vague, evasive, or unsupported.

3 means acceptable but shallow.

5 means specific, evidence-based, and operationally credible.

That gives me a total out of 75 across all 15 questions. My practical scoring guide is:

I also weight some categories more heavily during AI automation vendor selection. Security, compliance, integration depth, and reliability testing deserve extra weight because failure there creates the largest downstream cost. If two vendors score similarly, I compare written proposals against live-call answers. Mismatches matter. A polished deck can hide delivery weakness; spontaneous technical clarity rarely does.
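The scoring and weighting described above can be sketched in a few lines. This is one possible way to do it, not a fixed method: the question keys (`q1` to `q15`) and the 1.5 weight are assumptions I chose for the example. Note that once weights are applied, the maximum is no longer 75, so weighted totals should be compared against the weighted maximum.

```python
def weighted_total(scores, weights=None):
    """Sum 1-to-5 question scores, applying an optional per-question weight (default 1.0)."""
    weights = weights or {}
    for question, score in scores.items():
        if not 1 <= score <= 5:
            raise ValueError(f"{question}: score must be between 1 and 5, got {score}")
    return sum(score * weights.get(question, 1.0) for question, score in scores.items())


def max_total(questions, weights=None):
    """Highest possible total for the given questions under the same weights."""
    weights = weights or {}
    return sum(5 * weights.get(question, 1.0) for question in questions)


# Hypothetical scorecard: 15 questions, all scored 3 except two strong answers.
scores = {f"q{i}": 3 for i in range(1, 16)}
scores["q3"] = 5  # assumed to be the integration question
scores["q4"] = 5  # assumed to be the security question

# Assumed extra weight for integration, security, and reliability testing.
weights = {"q3": 1.5, "q4": 1.5, "q5": 1.5}

print(weighted_total(scores))            # prints: 49.0
print(weighted_total(scores, weights))   # prints: 55.5
print(max_total(scores, weights))        # prints: 82.5
```

Using the same weights for every agency keeps the comparison consistent, which matters more than the exact weight values.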

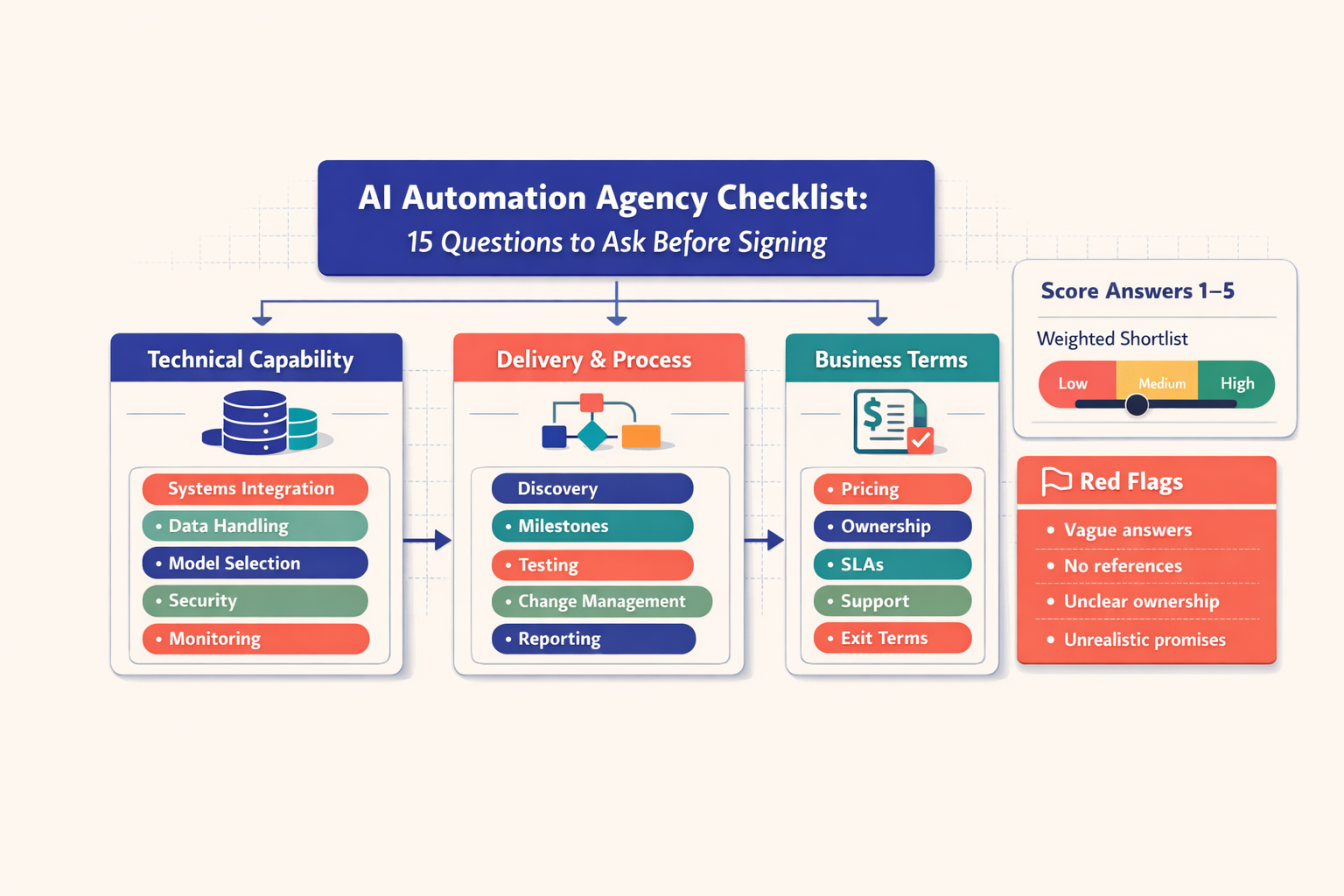

Five-step scoring workflow graphic with a 1-to-5 rating legend, highlighted red flag labels, and shortlist comparison bars showing Agency A at 72, Agency B at 61, and Agency C at 84

I also keep notes on confidence level. Not sales confidence. Evidence confidence.

During any AI automation agency review, I watch for patterns, not isolated phrases.

Red flags include:

Sagar Hebbale here -- and my advice is simple. Technology should solve real problems. If an agency cannot explain how it will integrate, govern, test, support, and transfer what it builds, I do not care how polished the pitch sounds.

A common pattern I see in the second half of vendor evaluations is buyer fatigue. People start compromising because one agency felt enthusiastic. Bad move. The right way to evaluate AI automation partner options is to compare evidence-backed answers, score them consistently, and choose the team that can execute with clarity. Ideas are easy. Delivery is the difference.

The best way to use an AI automation agency checklist is to turn it into a structured interview scorecard before calls begin. Ask the same core questions to every agency, require written follow-up for vague answers, and score responses immediately after each meeting so impressions do not replace evidence.

Comparing three to five agencies is usually enough to reveal meaningful differences in technical depth, process maturity, and pricing logic. Fewer than three can limit perspective, while too many can create decision fatigue and make it harder to evaluate AI automation partner options consistently.

An AI automation agency checklist should include training questions because adoption failure often comes from users, not tools. Agencies that provide admin training, escalation guidance, and operating documentation reduce dependence on their team and increase the odds that automations keep delivering value after launch.

AI automation vendor selection becomes more rigorous in regulated industries because technical capability alone is not enough. Buyers should verify data residency, audit logging, access controls, model governance, and incident response expectations early, since compliance gaps can block deployment even when the automation itself works.

If two agencies score nearly the same, ask for a small paid discovery sprint, a sample architecture outline, or a pilot plan tied to one workflow. This reveals how each team thinks under real constraints and often exposes the stronger delivery partner more clearly than another sales presentation.