Mastering LLM Integration in the Enterprise: A Comprehensive Guide

Unlock the potential of enterprise AI with effective LLM integration strategies.

Conceptual illustration of LLM integration into enterprise systems.

In an era defined by artificial intelligence and machine learning, large language models (LLMs) have begun to significantly influence enterprises across sectors. This guide outlines the essential aspects of LLM integration in the enterprise and shows how you can incorporate LLMs into your organization. Our objective is to equip you with a toolset that can improve your business operations through enterprise AI integration.

LLMs should be regarded as more than mere add-ons; they are versatile infrastructure tools that, when integrated into your enterprise, can yield numerous benefits. Whether you're seeking advanced features such as predictive text or sentiment analysis, incorporating an LLM will undoubtedly:

This enhancement in capabilities can propel your operations into a new era.

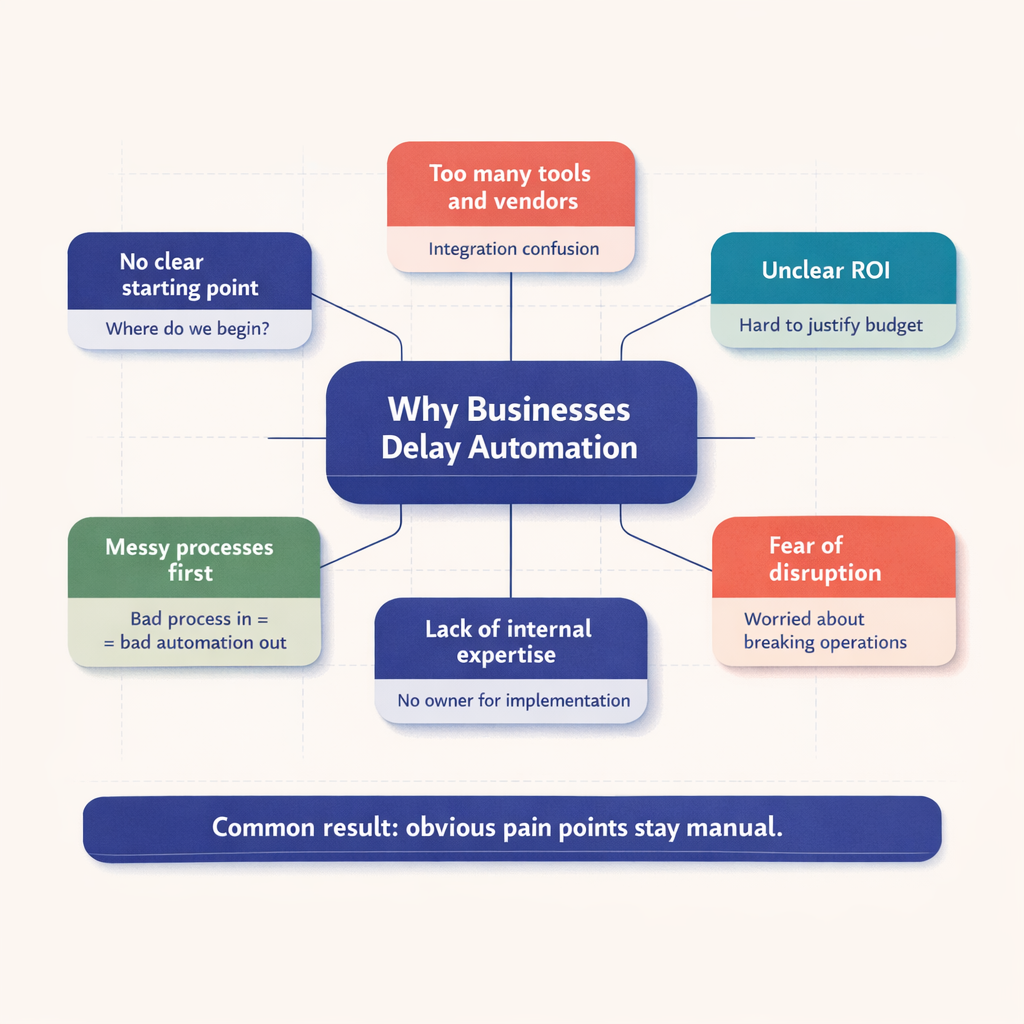

As you work to integrate an LLM into your enterprise, several key integration patterns emerge. It is crucial to take a holistic approach, considering data integration, application integration, and unified interface integrations for seamless operation. The LLM API integration guide offers an in-depth walkthrough.

No discussion of LLM integration in enterprises would be complete without addressing security and architecture. The guide on integrating AI emphasizes that incorporating LLMs securely into your enterprise architecture not only protects data but also fosters customer trust.

Additionally, cost-effectiveness should be a primary focus. Implementing intelligent cost control measures is essential to offset the substantial investments required for LLM integration. With those measures in place, the resulting improvements in efficiency and scalability can outweigh these costs.

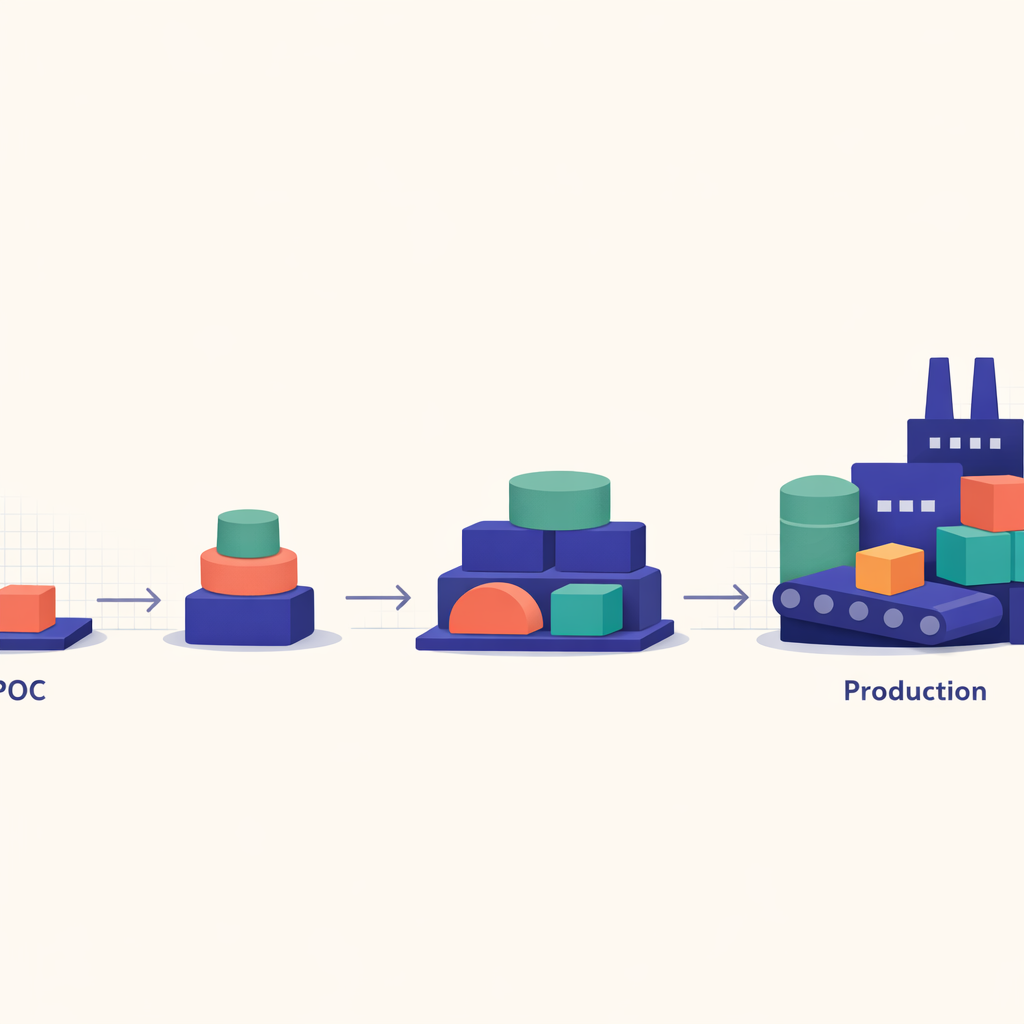

Transitioning from the planning phase to actual implementation can feel daunting. However, like any technological integration, moving from proof-of-concept (POC) to production and conducting thorough testing is critical to ensuring the success of your LLM integration journey.

In the following sections, we will explore more nuanced aspects of LLM integration within enterprises, providing insights you can translate into actionable strategies.

For now, take some time to envision how you might integrate LLMs into your business. Consider drafting potential business scenarios that could benefit from language model integrations. Visit our services page for additional resources on enterprise LLM integration.

Diagram showcasing different LLM integration patterns.

LLM integration, meaning the integration of large language models into enterprise systems, is a critical asset among infrastructure tools for enterprises. These models can enhance operations by improving user experiences and supporting smarter decision-making. By leveraging LLMs, your enterprise can achieve marked improvements in daily operations.

Integrating an LLM into your enterprise requires careful consideration of several key integration patterns. The following are essential:

| Integration Type |
|---|
| Data Integration |
| Application Integration |
| Unified Interface Integration |

Each of these integration types plays a vital role in ensuring seamless operations. For a detailed understanding, refer to our guide here.

Additionally, choosing the right LLM involves weighing the trade-offs associated with capacity, speed, and complexity across different models.

The architecture of your LLM and security parameters require prior attention. In today's data-driven landscape, safeguarding your LLM API integration is crucial—not only for protecting sensitive data but also for establishing and maintaining user trust.

These steps are essential for keeping your LLM API secure and functional. For more comprehensive information on securely integrating LLMs into your enterprise structure, refer to our LLM API integration guide.

Cost control is a critical aspect of LLM integration, requiring strategic planning and resource management to avoid excessive expenditures. Coupled with thorough testing of LLM functionality, this approach helps ensure a smooth transition from proof of concept (POC) to production.

With these insights, you can integrate LLMs into your enterprise in a way that improves operational efficiency, enhances customer interactions, and supports smarter decision-making, all within a secure framework.

For more expert guidance on this topic, explore our services and integrations.

Ready to leverage the transformative power of LLM integration in your enterprise? Get started today.

Integrating a language model into your enterprise is a multifaceted process that demands strategic planning. It involves comprehending various models, their respective trade-offs, and identifying the optimal integration patterns tailored to your organizational needs. This guide offers crucial insights into the successful implementation of LLM integration at the enterprise level.

When considering LLM integration within an enterprise, three essential patterns should be evaluated:

Data Integration: This pattern emphasizes the consolidation of data from diverse sources into a single, easily accessible format. It ensures that the LLM has access to high-quality data for its predictive models.

Application Integration: This approach interlinks various applications or systems, facilitating seamless data flow. It enhances the LLM's context understanding and simplifies the automation process.

Unified Interface Integration: This pattern centralizes the display of data from multiple applications into a single user interface. It improves user experience and typically leads to quicker resolution of queries.
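The data integration pattern above can be illustrated with a minimal sketch. It assumes two hypothetical sources (a CRM export and a ticketing export) whose field names are invented for the example; the idea is only that each source is normalized into one common record shape before anything is handed to the LLM.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    """Common record shape that every source is normalized into."""
    source: str
    doc_id: str
    text: str


def from_crm(row: dict) -> Document:
    # Hypothetical CRM export with "id" and "notes" fields.
    return Document("crm", str(row["id"]), row["notes"].strip())


def from_tickets(row: dict) -> Document:
    # Hypothetical ticketing export with "ticket" and "body" fields.
    return Document("tickets", str(row["ticket"]), row["body"].strip())


def build_context(docs: list[Document], max_chars: int = 2000) -> str:
    """Join normalized documents into one prompt context, stopping at a size cap."""
    parts: list[str] = []
    used = 0
    for doc in docs:
        entry = f"[{doc.source}:{doc.doc_id}] {doc.text}"
        if used + len(entry) > max_chars:
            break
        parts.append(entry)
        used += len(entry) + 1  # +1 for the newline separator
    return "\n".join(parts)


docs = [
    from_crm({"id": 7, "notes": " Customer asked about renewal. "}),
    from_tickets({"ticket": "T-12", "body": "Login fails after password reset."}),
]
context = build_context(docs)
```

Keeping the per-source adapters separate from `build_context` means a new source only needs one new adapter function, which is one reasonable way to keep the consolidation step maintainable.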

For a more comprehensive understanding of each of these patterns, please refer to our detailed guide here.

Before integrating an LLM into your enterprise, it is essential to grasp the trade-offs associated with different models. Key considerations include:

Approach this process with your specific use case in mind, and continually measure performance against your goals to refine your strategy.

When integrating an LLM, pay careful attention to the overarching architecture and security measures. Keep the following points in mind:

Initiating LLM integration begins with a well-defined proof of concept (POC). Utilize a small-scale project to evaluate the LLM's capabilities, resolve any implementation issues, and assess interoperability with your existing processes. Once you are content with the results, scale up to production. Remember to continuously review and refine the model, as LLM integration is an iterative journey.

Before we conclude, let's address some common inquiries regarding LLM integration in enterprises:

What advantages does an enterprise gain from LLM integration?

Effective LLM integration provides enterprises with a powerful infrastructural tool that enhances user experiences, streamlines operations, and significantly improves decision-making capabilities.

How can we ensure data security in LLM integration?

Prioritize robust data encryption, secure hosting, and regular security reviews to uphold high levels of data safety. Consulting with an AI integration specialist is also strongly recommended.

What factors should be considered when selecting a model for integration?

The choice of model will depend on your specific objectives and available resources. Key factors include model capacity, speed, and complexity.

Ready to embark on your journey towards LLM integration? For further assistance, check out our detailed LLM API integration guide here.

In enterprise AI integration, the process is rarely straightforward, particularly when incorporating large language models (LLMs). It is crucial to carefully consider the inherent trade-offs associated with different language models. These trade-offs typically center around capacity, speed, and complexity.

When integrating LLMs, aligning the model's capacity with the enterprise's demands is paramount. For instance, a high-capacity LLM may offer advanced functionality but could strain existing infrastructure and add cost that the enterprise's actual needs do not justify. Understanding your enterprise's requirements helps in selecting the most suitable LLM.

Speed is another vital factor in ensuring user satisfaction and operational efficiency. The chosen LLM should be capable of delivering real-time solutions without hindering the enterprise's workflow.

Lastly, the complexity of the LLM presents both challenges and benefits. While complex LLMs can offer detailed insights and nuanced analyses, their intricate requirements may pose hurdles to seamless integration.
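One way to make the capacity, speed, and complexity trade-off explicit is a simple weighted score. The sketch below is illustrative only: the model names, the 0-to-1 ratings, and the weights are all made-up assumptions, and complexity is expressed as "simplicity" so that higher is better for every criterion.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    name: str
    capacity: float    # 0..1, higher = more capable
    speed: float       # 0..1, higher = lower latency
    simplicity: float  # 0..1, higher = easier to integrate and operate


def score(model: ModelProfile, weights: dict[str, float]) -> float:
    """Weighted sum of the three criteria; weights are expected to sum to 1."""
    return (
        weights["capacity"] * model.capacity
        + weights["speed"] * model.speed
        + weights["simplicity"] * model.simplicity
    )


# Hypothetical candidates with made-up ratings.
candidates = [
    ModelProfile("large-model", capacity=0.9, speed=0.4, simplicity=0.3),
    ModelProfile("small-model", capacity=0.5, speed=0.9, simplicity=0.8),
]

# A latency-sensitive use case weights speed most heavily.
weights = {"capacity": 0.2, "speed": 0.5, "simplicity": 0.3}
best = max(candidates, key=lambda m: score(m, weights))
```

With these particular weights the smaller model scores higher; shifting weight toward capacity would reverse the result, which is the point of writing the trade-off down.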

The importance of secure LLM API integration cannot be overstated. Collaborating with a reputable AI integration specialist can help ensure the protection of user data and maintain user trust. For more information on secure integration, please visit our services page.

The architecture of the LLM and its compatibility with the enterprise's existing systems must be carefully evaluated. A misalignment can lead to costly adjustments. It is essential to have a well-structured LLM API integration guide to navigate this effectively.

Cost control is a matter of efficiency. Select an LLM designed to add value and streamline operations without incurring excessive expenses.

Additionally, consider progressing from proof of concept (POC) to production. Comprehensive testing is crucial to identify integration issues and optimize the LLM's compatibility with the enterprise infrastructure.

What factors should we consider when choosing an LLM?

How critical is security in LLM integration?

Are all LLMs the same?

In conclusion, while the journey to successful LLM integration in an enterprise can present various challenges, a solid understanding of model trade-offs and careful planning can significantly streamline the process. Explore more about integrating LLMs in the enterprise on our services page.

Achieving secure and resilient LLM integration in an enterprise setting requires more than just data protection; it is essential to build trust among stakeholders and ensure that business operations remain immune to external threats.

One critical factor in ensuring secure LLM integration is hosting the model in a safe environment. Storing your model in a secure setting protects your data from potential cyber hazards, thereby enhancing the trust of your customers and partners. Partnering with an experienced enterprise AI integration specialist who understands the intricacies of a secure hosting environment can be invaluable.

Along with secure hosting, robust encryption is vital. Encryption protects sensitive information by converting it into ciphertext that cannot be read without the corresponding key, so intercepted data is of no use to an attacker. Implementing strong encryption is essential to ensure the secure transmission of data between the LLM and the broader enterprise.
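For encryption in transit, a minimal sketch using Python's standard `ssl` module is shown here. It assumes the LLM is reached over HTTPS and that TLS 1.2 is an acceptable minimum version for your environment; both are assumptions, not requirements stated in this guide.

```python
import ssl


def strict_tls_context() -> ssl.SSLContext:
    """Client-side TLS context that verifies certificates and rejects old protocol versions."""
    # create_default_context() enables certificate and hostname verification.
    ctx = ssl.create_default_context()
    # Refuse anything older than TLS 1.2 (assumed policy for this example).
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


ctx = strict_tls_context()
```

A context like this can be passed to standard-library clients, for example `urllib.request.urlopen(url, context=ctx)`. Encryption at rest is a separate concern and is not covered by this sketch.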

Cost control is another significant consideration when integrating LLM into an enterprise. Given the potential expenses associated with AI integration, it is important to:

The importance of thorough testing in the LLM integration process should not be underestimated. Regularly conducting in-depth testing and validation of your LLM API integration helps identify potential issues early, reducing both downtime and reputational damage.

From POC to Production

Transitioning your LLM integration from proof of concept to production necessitates a comprehensive strategy. Consult our LLM API integration guide for an in-depth walkthrough. Focus on scalability, maintainability, and continuous improvement to ensure the long-term success of your LLM integration.

FAQs

Key factors include security measures (such as secure hosting and robust encryption), cost control, comprehensive testing, and a smooth transition from proof of concept (POC) to production.

Partnering with an experienced enterprise AI integration specialist and implementing secure hosting environments along with robust encryption methods is crucial.

Comprehensive testing is essential for identifying potential issues early, thus reducing both downtime and reputational damage.

To achieve successful enterprise-grade product integration, contact our experts at GPT Integration services today! We offer platforms and solutions that ensure seamless and secure LLM integration. Let’s work together to propel your business towards resiliency and a competitive edge!

Technical illustration depicting the transition from POC to full-scale production in LLM integration.

Controlling costs during the integration of large language models (LLMs) into an enterprise is a crucial undertaking. It is essential that the advantages gained from this integration are not overshadowed by excessive expenses. By incorporating cost control strategies into the LLM integration process, enterprises can achieve an AI integration that is both effective and financially sustainable.

It is important to recognize the various factors that may impact your cost control strategies during LLM integration. Generally, these factors fall into three main categories:

| Factor | Description |
|---|---|
| Integration Complexity | The complexity of the integration directly influences associated costs. Implementing cost-effective strategies (such as identifying essential AI tools and limiting customization) can significantly lower expenses. |
| LLM Model Requirements | The costs of integration vary based on the model's capacity, speed, and complexity. Integrating a high-capacity and complex model may necessitate additional resources, leading to increased costs. |
| Security Measures | Ensuring robust security may require implementing layers of data encryption, secure hosting, and ongoing auditing of security protocols, all of which involve their own costs. |
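To see how model requirements translate into spend, a rough usage-based estimate can help. The sketch below assumes a provider that bills per 1,000 input and output tokens; the prices and traffic figures are hypothetical placeholders, not real rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    # Hypothetical prices in USD per 1,000 tokens; substitute your provider's rates.
    input_per_1k: float
    output_per_1k: float


def monthly_cost(
    pricing: Pricing,
    requests_per_day: int,
    input_tokens: int,
    output_tokens: int,
    days: int = 30,
) -> float:
    """Estimate monthly spend from average tokens per request and daily volume."""
    per_request = (
        (input_tokens / 1000) * pricing.input_per_1k
        + (output_tokens / 1000) * pricing.output_per_1k
    )
    return per_request * requests_per_day * days


pricing = Pricing(input_per_1k=0.001, output_per_1k=0.002)
estimate = monthly_cost(pricing, requests_per_day=10_000, input_tokens=800, output_tokens=200)
```

Under these assumed numbers the estimate comes to roughly 360 USD per month. The estimate covers model usage only; hosting, security, and engineering costs from the table above would need to be added separately.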

Adopting a cost-efficient strategy during the LLM integration phase is essential. Here are three practical methods to consider:

Understanding how to integrate LLMs into the enterprise is vital for financial effectiveness. For more in-depth information, be sure to explore our comprehensive LLM API integration guide.

: The Enterprise Guide to LLM Integration, Post 14.

Testing an enterprise's LLM integration is essential for ensuring a successful implementation. While the integration process can be extensive, thorough testing is critical for providing insights into key aspects such as data handling, functionality, and system responsiveness. Below are the vital steps you should incorporate into your routine for testing LLM integration.

Before starting the testing process, it's crucial to establish clear goals. These objectives will guide your testing efforts and serve as the foundation for your key performance indicators. Examples of objectives may include enhancing customer interactions or improving predictive text capabilities.
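As an illustration of turning objectives into key performance indicators, the sketch below encodes each objective as a named threshold and checks measured values against it. The KPI names, targets, and measurements are invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Kpi:
    name: str
    target: float
    higher_is_better: bool = True

    def passed(self, measured: float) -> bool:
        """Compare a measurement to the target in the right direction."""
        if self.higher_is_better:
            return measured >= self.target
        return measured <= self.target


# Hypothetical objectives for a customer-facing assistant.
kpis = [
    Kpi("answer_accuracy", 0.90),
    Kpi("p95_latency_seconds", 2.0, higher_is_better=False),
]

# Hypothetical measurements from a test run.
measured = {"answer_accuracy": 0.93, "p95_latency_seconds": 2.4}
report = {k.name: k.passed(measured[k.name]) for k in kpis}
```

In this made-up run the accuracy objective passes while the latency objective fails, which gives the team a concrete item to address before moving on.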

Select a testing technique that aligns with your established objectives. Key factors to consider include the nature of your enterprise's infrastructure and the expected interactions between the LLM and other systems.

Conduct testing of your LLM integration in various environments to obtain a comprehensive view of its performance. This may involve testing in different locations, on various devices, or under diverse loads and stresses.
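Testing under load can be sketched with the standard library alone. The example below assumes a stand-in function, `fake_llm_call`, in place of a real client; it fires requests concurrently and reports median and 95th-percentile latency using a nearest-rank calculation.

```python
import math
import statistics
import time
from concurrent.futures import ThreadPoolExecutor


def fake_llm_call(prompt: str) -> str:
    """Stand-in for a real client call; replace with your integration's request function."""
    time.sleep(0.01)  # simulated network and inference time
    return f"echo: {prompt}"


def timed_call(prompt: str) -> float:
    """Return the wall-clock duration of one call in seconds."""
    start = time.perf_counter()
    fake_llm_call(prompt)
    return time.perf_counter() - start


def run_load_test(prompts: list[str], concurrency: int) -> dict:
    """Run all prompts with a fixed number of worker threads and summarize latency."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = sorted(pool.map(timed_call, prompts))
    # Nearest-rank 95th percentile.
    p95_index = max(0, math.ceil(0.95 * len(latencies)) - 1)
    return {
        "count": len(latencies),
        "median": statistics.median(latencies),
        "p95": latencies[p95_index],
    }


results = run_load_test([f"question {i}" for i in range(40)], concurrency=8)
```

Repeating the run with different `concurrency` values gives a first impression of how latency changes as load increases.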

Since your LLM integration may handle sensitive information, ensuring robust security is paramount. Security testing should confirm that all data passing through the LLM platform is safeguarded, following a comprehensive LLM API integration guide.

During testing, enterprises must be prepared to make challenging decisions, including how to balance speed, capacity, and complexity based on the demands of the AI integration.

As a final step, assess all testing results and recommendations to make necessary adjustments. Proper documentation not only facilitates informed decision-making but also supports your enterprise's growth trajectory.

When these steps are carefully executed, they will validate the reliability of your LLM system, ensuring a smooth transition from POC to full-scale production. If you encounter any uncertainties, do not hesitate to seek professional guidance.

For more insights on LLM integration in an enterprise environment, visit our comprehensive guide [here](/services/gpt-integration).

FAQs

What are common challenges in testing LLM integration?

Given the multiple moving components, challenges may arise in data handling, navigating the complexities of the infrastructure, and implementing robust security measures to protect sensitive information.

How long does the testing process take?

The duration of the testing process largely depends on the complexity of the LLM system being integrated, the expected data volume, and specific enterprise requirements.

Can I skip the testing process?

Skipping the testing phase can lead to vulnerabilities within your system, subpar performance, and potential loss of sensitive data. Hence, it is a necessary and vital process for successful LLM integration.

For a comprehensive guide to LLM integration, visit our [services page](/services/gpt-integration).

Transitioning from a proof of concept (POC) to full-scale production in LLM integration marks a significant milestone in your company's AI journey. This step represents the shift from evaluating theoretical benefits to realizing tangible rewards. However, this process requires meticulous planning to effectively integrate LLM into your enterprise.

Before advancing from POC to production, it is crucial to identify potential risks and develop strategies for mitigation. These risks may include:

Patterns that functioned seamlessly during your proof of concept may not perform as effectively in large-scale production. It is essential to review and refine these integration patterns prior to transitioning to production.

Ensuring that your enterprise architecture is equipped to accommodate the LLM integration is vital. This preparation involves making adjustments for scalability, implementing security upgrades, and enhancing the APIs that facilitate the integration.
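One common way to harden the API layer for production is to retry transient failures with exponential backoff. The sketch below is an assumption about what such hardening might look like, not a prescribed method; `TransientError` is a hypothetical exception standing in for timeouts or rate-limit responses from a real client.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical error type standing in for timeouts or rate-limit responses."""


def call_with_retry(fn, max_attempts=4, base_delay=0.05, sleep=time.sleep):
    """Call fn(), retrying on TransientError with exponential backoff and jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to the caller
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay / 2))


attempts = {"n": 0}


def flaky():
    """Fails twice, then succeeds, to exercise the retry path."""
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise TransientError("temporary failure")
    return "ok"


# The sleep function is injected so the demonstration does not actually wait.
result = call_with_retry(flaky, sleep=lambda _: None)
```

Capping the number of attempts matters as much as the backoff itself, since unbounded retries can amplify load during an outage.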

Providing training for end-users to leverage the advantages of your new LLM integration can significantly impact your return on investment (ROI). It is important to plan and execute this training well in advance of the transition from POC to production.

Understanding the concerns or questions your team may have during this transition is crucial. Here are some commonly asked questions:

What indicators should we look for to know we're ready to move from POC to production?

How long should the transition from POC to production take?

How should we handle potential cost escalations during the transition?

In conclusion, taking an enterprise LLM integration from POC to production necessitates careful planning, risk identification, and user training. An enterprise AI specialist can assist you through all stages of your LLM API integration. Trust their expertise to make your LLM integration journey smoother and more efficient.

In this section, we will address the three most common questions that professionals frequently ask when considering the integration of LLMs into their enterprise.

When integrating LLMs, architectural considerations depend on your company's existing systems and future AI ambitions. The LLM API integration guide emphasizes that the architecture should be scalable and robust to accommodate the intensive computational demands of LLMs. Consider adopting viable structures such as microservices or event-driven architectures. It's essential to ensure that the architecture aligns with your available resources and complexity while also weighing model trade-offs for optimal efficiency.
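An event-driven structure can be sketched in a few lines. The example below is a toy, in-process version that uses a standard-library queue and one worker thread; in a real deployment the queue would typically be a message broker, and `summarize` (a placeholder here) would call the model.

```python
import queue
import threading


def summarize(text: str) -> str:
    """Stand-in for an LLM call; a real worker would call your model endpoint here."""
    return text[:20]


def worker(events: queue.Queue, results: list) -> None:
    """Consume events until a None sentinel arrives."""
    while True:
        event = events.get()
        if event is None:  # sentinel: shut down
            break
        results.append({"id": event["id"], "summary": summarize(event["text"])})


events: queue.Queue = queue.Queue()
results: list = []
thread = threading.Thread(target=worker, args=(events, results))
thread.start()

# Producers only publish events; they do not wait for the model.
events.put({"id": 1, "text": "Invoice 4471 is overdue by 30 days."})
events.put({"id": 2, "text": "Customer requests a contract extension."})
events.put(None)
thread.join()
```

Because producers and the LLM worker are decoupled by the queue, the number of workers can be scaled independently of the systems that emit events.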

Effective cost control for LLM integration within an enterprise requires proactive budgeting, ongoing expense tracking, and forecasting potential cost overruns. Collaborating with a financial advisor is advisable to ensure accurate budgeting and appropriate weightings of different components within your budget. Regularly monitoring usage and performance can help identify and address costly inefficiencies before they escalate.
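Ongoing expense tracking and overrun forecasting can start very simply. The sketch below assumes daily spend figures are available from your provider's billing data and uses a plain linear projection; the budget and amounts are made up.

```python
from dataclasses import dataclass, field


@dataclass
class BudgetTracker:
    monthly_budget: float
    days_in_month: int = 30
    daily_spend: list[float] = field(default_factory=list)

    def record_day(self, amount: float) -> None:
        self.daily_spend.append(amount)

    def spent(self) -> float:
        return sum(self.daily_spend)

    def forecast(self) -> float:
        """Linear projection: average daily spend so far times days in the month."""
        if not self.daily_spend:
            return 0.0
        return self.spent() / len(self.daily_spend) * self.days_in_month

    def over_budget_risk(self) -> bool:
        return self.forecast() > self.monthly_budget


tracker = BudgetTracker(monthly_budget=3000.0)
for amount in (90.0, 110.0, 130.0):
    tracker.record_day(amount)
```

After three days averaging 110 per day, the projection exceeds the 3,000 budget, so `over_budget_risk()` flags the overrun while there is still time to react. A linear projection ignores weekly cycles and growth, so treat it as an early warning rather than a forecast to plan against.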

Ensuring security during enterprise AI integration necessitates a comprehensive approach. Implementing robust encryption methods, secure data handling practices, and adhering to compliance regulations are critical steps. A dedicated team of security experts should oversee the LLM integration to promptly address any vulnerabilities.
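One secure data handling practice is to redact obvious sensitive values before a prompt leaves your systems. The sketch below is deliberately simplistic: the two regular expressions are illustrative assumptions that will miss many real-world formats, and production redaction usually needs a dedicated tool and review.

```python
import re

# Simplified email pattern, for illustration only.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Simplified card pattern: 13 to 16 digits, optionally separated by spaces or dashes.
CARD = re.compile(r"\b(?:\d[ -]?){12,15}\d\b")


def redact(text: str) -> str:
    """Replace email addresses and card-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return CARD.sub("[CARD]", text)


prompt = redact("Refund jane.doe@example.com for card 4111 1111 1111 1111 today.")
```

Redacting before the request is sent means the sensitive values never reach the model provider or its logs, which complements encryption rather than replacing it.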

In conclusion, integrating LLMs into an enterprise is a strategic initiative that can transform your operations and provide a competitive advantage. This process entails establishing the right infrastructure, understanding model trade-offs, ensuring security, designing an appropriate architecture, and maintaining effective cost control.

For a more detailed discussion on LLM integration and other related services, reach out through this link. We are ready to guide you through your LLM journey.

Conceptual image of an optimized LLM integration strategy.

As we wrap up our exploration of the enterprise guide to LLM integration, it is evident that incorporating LLMs into your business strategy can significantly transform your enterprise infrastructure. This strategic move can enhance customer interactions and facilitate more informed decision-making.

The process of integrating an LLM into an enterprise involves several essential stages. Consider the following key components:

Engaging an experienced enterprise AI integration specialist can significantly enhance the LLM integration process. Their expertise helps ensure secure hosting and robust encryption, both essential for fostering trust with your users.

Successfully integrating enterprise AI, including LLMs, necessitates a careful balance of technology adoption, cost management, efficient testing procedures, and integration strategies, all of which are detailed in our LLM API integration guide. The ultimate aim is to create an enterprise that is smarter, more efficient, and highly responsive to customer needs.

Wondering what to do next? Begin by revisiting the key insights shared in our blog posts, keeping in mind that the LLM integration journey can be manageable. By collaborating with the right experts and being attentive to trade-offs, cost control, and security, you will be well-positioned to successfully integrate LLMs into your enterprise.

Take the next step with us and explore our GPT integration services, an excellent opportunity to advance your journey towards successful enterprise AI integration.