AI Agent Security Compliance: Navigating SOC 2, HIPAA, and More

Ensuring Secure AI Agents Through Compliance & Risk Management Strategies

Abstract representation of AI security compliance with indigo and warm coral geometric elements

Artificial Intelligence (AI) has become a crucial technology across sectors such as healthcare and financial services. Its benefits are substantial, but keeping AI agents secure and compliant presents distinct challenges. Deploying AI agents securely requires a clear understanding of potential threats, robust security patterns, and strict adherence to compliance regulations.

AI agents in many organizations process, store, and transmit sensitive data. That responsibility introduces risks, such as data breaches, prompt injections, and hallucinations, that can expose protected data or produce unreliable outputs.

Three types of risk are particularly concerning in the AI security landscape: data breaches, prompt injections, and hallucinations.

Recognizing these risks underscores the urgent need for secure AI agents.

Within the realm of compliance, System and Organization Controls 2 (SOC 2), the Health Insurance Portability and Accountability Act (HIPAA), and the General Data Protection Regulation (GDPR) are particularly significant:

| Compliance Standard | Description |
|---|---|
| SOC 2 | Establishes stringent security requirements across five trust principles: security, availability, processing integrity, confidentiality, and privacy. |
| HIPAA | Focuses on protecting health information handled by healthcare organizations and the business associates that process it on their behalf. |
| GDPR | Safeguards the personal data of individuals in the EU and extends its protections to any AI system that processes that data. |

By adhering to these standards, organizations can foster trust and demonstrate a strong commitment to safeguarding sensitive data.

AI security is a complex challenge, especially in sectors that must meet strict compliance frameworks such as SOC 2 and HIPAA. AI agents routinely process, store, and transmit sensitive data, so robust safeguards are needed against data breaches, prompt injections, and hallucinations, all of which can lead to data exposure or to unreliable or harmful outputs.

A data breach occurs when unauthorized individuals gain access to sensitive data. Given the extensive reliance on data in AI systems, this risk is particularly significant, potentially resulting in violations of HIPAA compliance standards and SOC 2 trust principles.

Prompt injection is another critical risk in AI. It occurs when malicious actors craft inputs that manipulate an AI model into ignoring its instructions or generating harmful outputs. This threat highlights the necessity of input validation, a vital safeguard for Large Language Models (LLMs).
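As a minimal sketch of what input validation could look like before text reaches a model, the example below checks length, control characters, and a short list of suspicious phrases. The function name `validate_user_input`, the length limit, and the phrase patterns are all assumptions made for illustration. A phrase list like this is a heuristic and will not catch every injection attempt, so it would normally be one layer among several.

```python
import re
import unicodedata

# Hypothetical limit and phrases, chosen for illustration only.
MAX_INPUT_CHARS = 2000
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.IGNORECASE),
    re.compile(r"reveal (your |the )?system prompt", re.IGNORECASE),
]


def validate_user_input(text: str) -> list[str]:
    """Return the problems found in the input; an empty list means it passed."""
    problems = []
    if len(text) > MAX_INPUT_CHARS:
        problems.append("too_long")
    # Flag control characters other than ordinary whitespace.
    if any(unicodedata.category(ch) == "Cc" and ch not in "\n\r\t" for ch in text):
        problems.append("control_characters")
    if any(pattern.search(text) for pattern in SUSPICIOUS_PATTERNS):
        problems.append("suspicious_instruction")
    return problems
```

A caller might reject or escalate any input for which the returned list is not empty, rather than silently passing it to the model.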

Hallucinations refer to instances where an AI model produces misleading or incorrect information. These occurrences can result in erroneous decision-making, potentially inflicting substantial harm on systems reliant on AI-generated outputs.

Addressing these security risks calls for security patterns such as isolation, logging, and strict access control. Isolation limits what a compromised or manipulated agent can reach, which reduces the impact of unauthorized access and prompt injections. Logging enables monitoring and rapid response to potential security incidents. Access control reduces the risk of data breaches by restricting sensitive information to authorized users.

Moreover, adopting safeguards for LLMs, such as filtering Personally Identifiable Information (PII) and validating inputs, further mitigates these risks. PII filtering protects privacy by ensuring that sensitive information, like names, addresses, or Social Security numbers, is not inadvertently included in outputs. Validation helps catch prompt injection attempts before they reach the model, which strengthens the security and compliance of AI agents.
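To make PII filtering concrete, here is a small sketch that redacts a few common identifier formats from model output using regular expressions. The `redact_pii` function and the three patterns are illustrative assumptions; they cover only US-style Social Security numbers, email addresses, and phone numbers.

```python
import re

# Illustrative patterns only; real deployments need broader coverage.
PII_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}


def redact_pii(text: str) -> str:
    """Replace each matched identifier with a bracketed label such as [SSN]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


print(redact_pii("Reach Jane at jane.doe@example.com or 555-123-4567; SSN 123-45-6789."))
# prints: Reach Jane at [EMAIL] or [PHONE]; SSN [SSN].
```

Note that the name "Jane" passes through untouched. Pattern matching cannot reliably detect names or addresses, so a production filter would likely combine patterns like these with a dedicated entity-recognition step.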

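When evaluating AI vendors, consider employing a checklist that covers their compliance with relevant regulations such as SOC 2 and HIPAA, their existing security measures, and their capacity to respond to breaches.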

Q: How can I begin implementing measures for enhanced AI security?

A: Start by understanding the potential risks associated with AI and identifying which data require protection. Then, implementing security patterns such as isolation, logging, and access control can greatly enhance overall security.

Q: What should be included in my vendor checklist for AI security?

A: The checklist should assess the vendor's compliance with relevant regulations like SOC 2 and HIPAA, their existing security measures, and their capacity to respond to breaches promptly and effectively.

Q: How does PII filtering function in the security landscape?

A: PII filtering safeguards AI privacy by ensuring that personally identifiable or sensitive information is not unintentionally included in data outputs.

In conclusion, while the benefits of AI are undeniable, they do not negate the substantial risks associated with processing sensitive data. Incorporating these insights into your AI development processes, along with ensuring stringent regulatory compliance, is essential for establishing a secure and effective AI enterprise.

Artificial Intelligence (AI) has emerged as a cornerstone across various sectors, fundamentally transforming the landscape of data management. Consequently, it is vital to understand the role that frameworks such as SOC 2 and HIPAA play in AI security compliance. They define the parameters of AI security, safeguarding sensitive data while ensuring adherence to privacy standards.

SOC 2 standards are essential for securing AI agents that manage protected data. SOC 2 is based on five trust principles (security, availability, processing integrity, confidentiality, and privacy) and establishes stringent security measures. This framework assists enterprises in mitigating risks associated with data breaches, prompt injections, and hallucinations. Compliance with SOC 2 therefore gives companies a robust structure to achieve the following:

| Security Focus | Implications |
|---|---|
| Mitigate data breaches | Reduces potential data loss and associated costs |
| Bolster security infrastructure | Enhances overall data protection mechanisms |
| Reduce economic damage | Protects against financial losses and reputational harm |

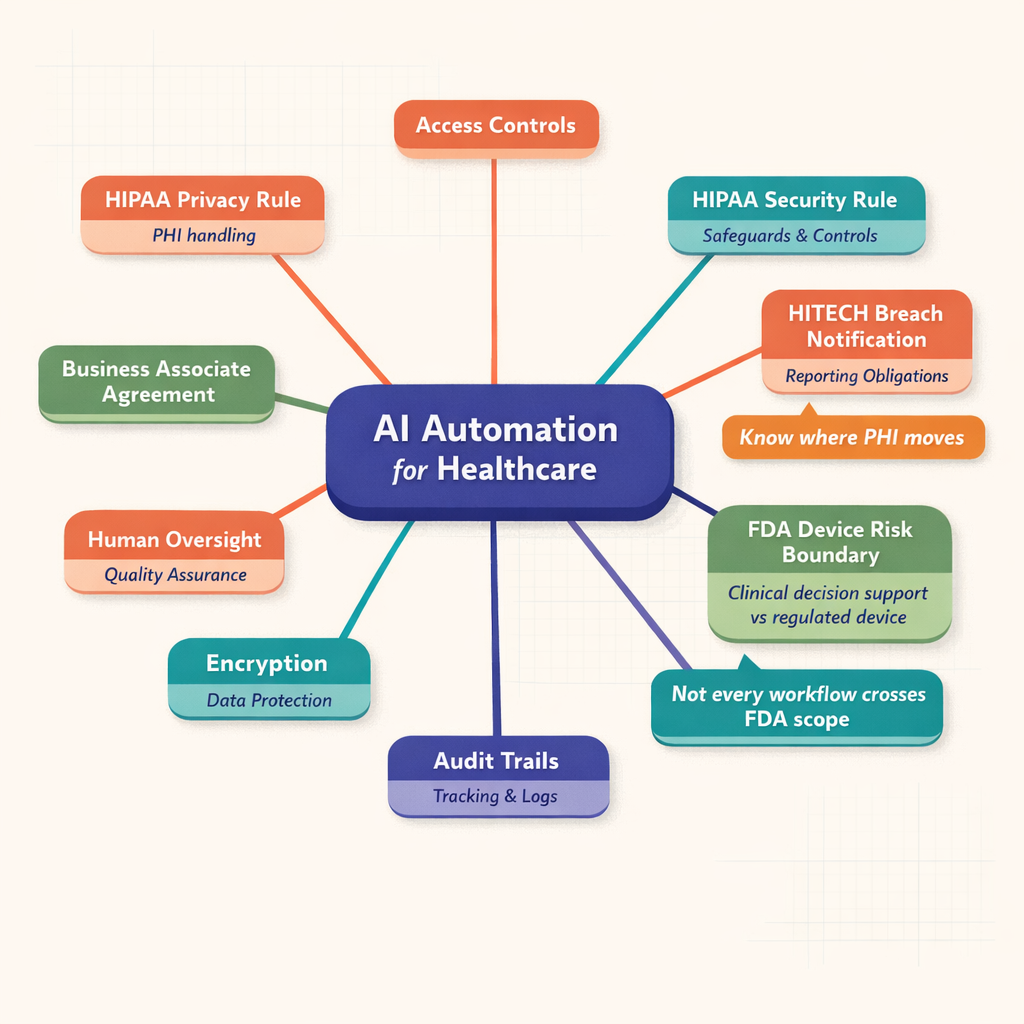

In parallel, the Health Insurance Portability and Accountability Act (HIPAA) is pivotal for AI security in sectors such as healthcare, where handling sensitive patient data is commonplace. Ensuring HIPAA compliance minimizes the risk of unauthorized access to or disclosure of protected health information. This legislation requires enterprises to implement a variety of security measures, including administrative, physical, and technical safeguards.

The intersection of SOC 2 and HIPAA compliance offers a comprehensive approach to AI agent security, addressing a threat landscape that encompasses data leakage, prompt injection, and hallucinations. Implementing these security patterns and reducing large language model (LLM) vulnerabilities are significant steps towards creating a more secure AI enterprise environment.

While achieving these regulatory standards may not be a straightforward process, it is crucial to recognize that compliance transcends mere legal obligations; it is a testament to your commitment to the security and privacy of users' sensitive data. In the subsequent sections, we will provide a practical vendor checklist and FAQs to assist you in navigating this process. In conclusion, understanding and integrating SOC 2 and HIPAA compliance within AI systems is a sound business strategy—an investment in the long-term credibility and safety of your enterprise.

Ensuring security compliance for AI agents in an enterprise requires robust security patterns and practices. Key focus areas include isolation, logging, and access controls. Applied effectively, these patterns help organizations achieve HIPAA compliance, SOC 2 compliance, and adherence to other regulatory frameworks.

Isolation involves segregating different components of the AI system and limiting their interactions to mitigate potential vulnerabilities. For example, an AI processing unit may be isolated from other network systems to prevent exploitation through connectivity that could compromise data. This approach enhances security for AI agents by significantly reducing the risk of data leakage and attacks.
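Isolation can also be applied at the application layer, in addition to the network-level separation described above. The sketch below assumes a hypothetical `IsolatedToolbox` helper that exposes only explicitly registered tools to an agent, so a manipulated agent cannot reach functions it was never given. The class, the error type, and the example tool are illustrative names, not part of any specific framework.

```python
from typing import Callable


class ToolNotAllowedError(PermissionError):
    """Raised when an agent requests a tool outside its allowlist."""


class IsolatedToolbox:
    """Exposes only explicitly registered tools to an agent (hypothetical helper)."""

    def __init__(self) -> None:
        self._tools: dict[str, Callable[..., object]] = {}

    def register(self, name: str, func: Callable[..., object]) -> None:
        self._tools[name] = func

    def call(self, name: str, *args: object, **kwargs: object) -> object:
        # Deny by default: anything not registered is unreachable.
        if name not in self._tools:
            raise ToolNotAllowedError(f"tool {name!r} is not available to this agent")
        return self._tools[name](*args, **kwargs)


def lookup_order_status(order_id: str) -> str:
    # Stand-in for a read-only query; a real tool would call a backend service.
    return f"order {order_id}: shipped"


toolbox = IsolatedToolbox()
toolbox.register("lookup_order_status", lookup_order_status)
```

The deny-by-default check in `call` is the design choice that matters here: the agent's reach is defined by what is registered, not by what happens to exist in the process.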

Logging is vital for securing AI agents: it records every operation the AI system performs, which helps identify unusual patterns indicative of security threats. To be effective, logs should be audited regularly for anomalies and retained as an evidence trail for potential investigations.
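One way to sketch such an audit trail is with Python's standard `logging` module and one JSON record per agent action. The logger name, field names, and function names below are assumptions for illustration. The example stores a SHA-256 digest of the prompt instead of the raw text, on the assumption that audit logs should not themselves become a store of sensitive data.

```python
import hashlib
import json
import logging
from datetime import datetime, timezone

audit_logger = logging.getLogger("agent.audit")  # hypothetical logger name


def build_audit_record(agent_id: str, user_id: str, action: str, prompt: str) -> dict:
    """Build one audit entry; the prompt is kept as a SHA-256 digest, not raw text."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "user_id": user_id,
        "action": action,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
    }


def log_agent_action(agent_id: str, user_id: str, action: str, prompt: str) -> dict:
    """Emit the audit entry as a single JSON line and return it."""
    record = build_audit_record(agent_id, user_id, action, prompt)
    audit_logger.info(json.dumps(record, sort_keys=True))
    return record
```

Nothing is written until a handler and level are configured for the `agent.audit` logger, for example one that ships records to append-only storage.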

Access control is a foundational security pattern designed to manage who or what can view or use resources within an AI network. Access should be granted strictly on a need-to-know basis to minimize the attack surface. For instance, a data scientist may not require access to raw Protected Health Information (PHI), which is protected under HIPAA.
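The need-to-know principle can be sketched as a role-to-permission lookup that denies anything not explicitly granted. The roles, the permission names, and the `is_allowed` function are hypothetical and mirror the data scientist example above.

```python
from enum import Enum


class Permission(Enum):
    READ_DEIDENTIFIED = "read_deidentified"
    READ_RAW_PHI = "read_raw_phi"


# Hypothetical role-to-permission mapping; anything not listed is denied.
ROLE_PERMISSIONS = {
    "data_scientist": {Permission.READ_DEIDENTIFIED},
    "clinician": {Permission.READ_DEIDENTIFIED, Permission.READ_RAW_PHI},
}


def is_allowed(role: str, permission: Permission) -> bool:
    """Return True only if the role was explicitly granted the permission."""
    return permission in ROLE_PERMISSIONS.get(role, set())
```

With this mapping, `is_allowed("data_scientist", Permission.READ_RAW_PHI)` returns `False`, and an unknown role is denied every permission.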

Implementing these practices involves employing large language model (LLM) safeguards, such as filtering Personally Identifiable Information (PII) and validating user input prior to processing. A vendor checklist can streamline adherence to these methodologies by providing a systematic approach to securing the deployment of AI agents.

In conclusion, securing AI agents to ensure compliance requires the application of essential security patterns, including isolation, logging, and access controls, while adhering to frameworks such as HIPAA and SOC 2. Focused efforts in these areas can significantly diminish AI-related risks, safeguarding the enterprise's reputation and, ultimately, the data subjects it serves.

Three abstract symbols representing Isolation, Logging, and Access Control security patterns

Abstract version of a checklist with tick marks in indigo and warm coral

Advances in AI call for sophisticated security measures to protect the privacy and safety of the data it handles. Safeguards for large language models (LLMs), such as Personally Identifiable Information (PII) filtering and input validation, help protect sensitive data and support compliance with AI security regulations.

PII encompasses any data that could potentially identify a specific individual. LLM safeguards use PII filtering to detect and manage such data, ensuring that sensitive information is neither processed nor stored without appropriate protections. Validation, in contrast, verifies the integrity and authenticity of data before the AI agent processes it.

In an AI security context, robust PII filtering and validation mechanisms mitigate the risk of data breaches, prompt injections, and hallucinations. These measures actively screen and validate data, significantly reducing the likelihood of a breach or violation of AI privacy policies.

Moreover, they play a critical role in ensuring compliance with regulations such as SOC 2, HIPAA, and GDPR. Below is a summary of compliance requirements:

| Regulation | Compliance Requirement |
|---|---|
| SOC 2 | Implementing data privacy and protection controls that adhere to the trust principle of confidentiality |
| HIPAA | Safeguarding sensitive patient information, which can be supported by effective PII filtering |
| GDPR | Compliance with data protection rules applicable to the personal data of individuals in the EU |

Furthermore, GDPR's scope covers the personal data of individuals in the EU, and PII filtering and validation are essential for adhering to its data protection guidelines. Following these guidelines helps maintain user trust and demonstrates the enterprise's commitment to ensuring AI privacy.

Given the importance of these safeguards in your AI system, consider asking vendors of AI solutions how they implement PII filtering and validation.

By effectively implementing and managing these crucial safeguards, businesses can not only ensure the security of their AI agents but also reinforce their commitment to maintaining and enhancing the privacy and security of user information.

Choosing a vendor to support your enterprise AI security requires thorough due diligence to ensure compliance with AI agent security standards. To assist in this evaluation, consider the following checklist:

SOC 2 Compliance: Does the AI vendor comply with SOC 2 requirements? This standard is crucial in today's digital environment, as it addresses the security, availability, processing integrity, confidentiality, and privacy of customer data.

HIPAA Compliance: If your business operates in healthcare or any sector that manages sensitive health information, it is essential to choose an AI vendor that can demonstrate strict adherence to HIPAA regulations.

GDPR Compliance: Any processing of personal data belonging to individuals in the European Union requires GDPR compliance from the AI vendor. The vendor's commitment to data protection and privacy regulations ensures the ethical and lawful handling of your customer data.

Secure AI Agents: Can the vendor guarantee the security of their AI agents against potential threats, including prompt injection, data leakage, and hallucination?

Security Pattern Implementation: Is the vendor capable of implementing established security patterns, such as isolation, logging, and access control, within their AI operations?

LLM Safeguards: Does the AI vendor employ Large Language Model (LLM) safeguards, including PII filtering and validation mechanisms, to protect sensitive information?

Selecting an AI vendor that meets these criteria will help establish strong data protection standards within your organization and ensure ongoing compliance with essential regulatory frameworks.

Navigating the landscape of AI security and compliance can be challenging. Below are answers to some commonly asked questions regarding this important subject:

What is AI agent security compliance?

AI agent security compliance refers to the implementation of robust security measures and adherence to regulatory frameworks such as SOC 2 and HIPAA. These measures are designed to safeguard sensitive data processed by AI agents from potential risks and threats.

Why is HIPAA compliance important in AI security for enterprises?

HIPAA compliance is essential within the healthcare sector, as it requires the protection of personal health information. Non-compliance can lead to significant penalties.

How does SOC 2 influence data security in AI?

SOC 2 standards play a critical role in managing protected data. They impose stringent security measures based on five trust principles: security, availability, processing integrity, confidentiality, and privacy.

Three colored question marks symbolizing frequently asked questions

In the rapidly evolving landscape of AI security for enterprises, adherence to frameworks such as SOC 2 and HIPAA is essential. Achieving AI agent security compliance provides a robust defense against potential threats, enhancing data confidentiality and improving the overall integrity of processed information.